If you give a large language model a browser, it will click. If you give it a faster browser, it will click faster. But the next order-of-magnitude speedup won’t come from making a single path faster; it will come from exploring multiple paths in parallel and committing only what you can prove is safe.

This article proposes a concrete architecture for speculative multi-path browser agents. The core ideas:

- Forked tabs from a shared starting point using DOM snapshots, storage copies, and offscreen contexts.

- Branch-and-bound search to explore multiple plausible actions in parallel, within a time/compute budget.

- Causal telemetry to attribute downstream improvements to upstream actions and score branches.

- Exactly-once writes via an intent ledger and policy gates that separate “decide” from “do” and prevent duplicate or unsafe operations.

We will cover the why, the architecture, code snippets, pitfalls, and evaluation. You should leave with a blueprint you can implement in a week and refine over months.

1) Why speculation for browser agents

Web agents are slowed by two fundamental phenomena:

- Latency variance: The web is slow and unpredictable. Network RTTs, script execution, anti-bot deferrals, and rendering time dominate, and waiting serially for each step (LLM propose -> click -> wait -> parse) burns wall-clock time.

- Branch uncertainty: LLMs produce a distribution over next actions. The top-1 action can be wrong, and recovering from a bad click incurs extra steps and page loads.

Speculative execution is how CPUs beat these issues. They predict branches and execute ahead; mispredictions are rolled back, and correct guesses retire. Browser agents can mirror this:

- Predict top-K plausible actions (clicks, fills, navigations) and fork off each in an isolated context.

- Run them a short distance (L steps) to gather evidence.

- Score and prune aggressively, limiting compute.

- Commit only one branch’s writes; discard or roll back the rest.

The twist: the web has side effects. Our design makes them explicit and controllable.

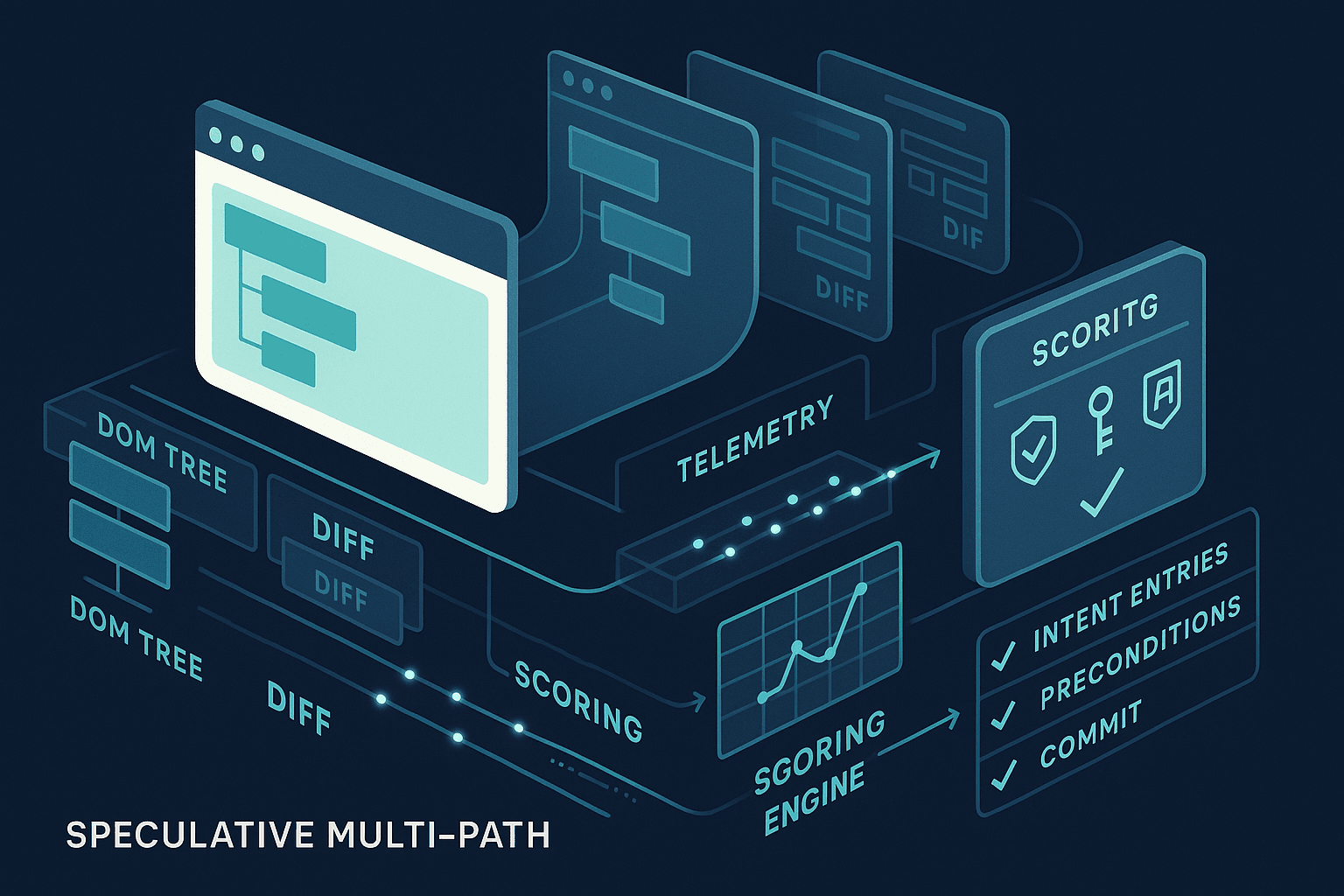

2) Architecture overview

The system is composed of four planes:

- Speculation plane: propose, fork, and explore branches in parallel.

- Telemetry plane: capture causal, structured signals from pages and actions.

- Decision plane: score branches, prune, and select a winner.

- Commit plane: represent writes as intents, gate them by policy, and ensure exactly-once execution.

Key components:

- Orchestrator: manages budgets and the branch-and-bound loop.

- Session cloner: materializes forked tabs via DOM snapshots, cookie/localStorage copies, and network stubbing.

- Branch runner: executes an LLM-guided action sequence for a branch.

- Telemetry bus: gathers DOM diffs, network traces, and performance timings, and composes them into a causal graph.

- Scoring engine: a learned or rule-based heuristic that produces a scalar utility for each branch.

- Intent ledger: an append-only log of proposed operations with preconditions and idempotency keys.

- Policy gates: allow/deny commits based on risk, ABAC, or human-in-the-loop.

- Commit executor: applies the winning intent set with exactly-once semantics.

3) Forked tabs via DOM snapshots and storage cloning

Forking a browser session has three constraints:

- State fidelity: We need DOM contents, form inputs, scroll positions, cookies, localStorage/sessionStorage, and sometimes in-page JS state.

- Isolation: Forked branches must not leak writes to each other or the main session.

- Speed: Forking must be near-instant, or we burn the benefit of speculation.

Practical tactics (Chrome DevTools Protocol/CDP + Playwright):

- DOM snapshots: Use CDP DOMSnapshot.captureSnapshot to serialize the DOM and layout. This gives you a static view including nodes, attributes, text, and computed styles. It does not capture the JS heap or pending timers (that’s fine for short lookahead).

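- Storage cloning: Copy cookies via Network.getCookies + Network.setCookies on a new target. Mirror localStorage/sessionStorage by reading them with DOMStorage.getDOMStorageItems (the storage ID can use the key returned by Storage.getStorageKeyForFrame) and writing entries back with DOMStorage.setDOMStorageItem, or with Runtime.evaluate running in the target origin.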

- Offscreen contexts: Launch branch pages in headless/offscreen contexts via Target.createTarget with "background": true (or hidden windows in Playwright) to reduce rendering overhead.

- Network stubbing: Intercept requests (Fetch domain or Playwright route) to force cache hits, block analytics, and optionally replay from a deterministic cache recorded at the parent state. For speculative steps, favor GETs; block POST/PUT/PATCH/DELETE until commit.

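- Scroll and viewport: Read viewport size and scroll offsets with Page.getLayoutMetrics, then restore them in the branch (e.g., Emulation.setDeviceMetricsOverride for the viewport and Runtime.evaluate calling window.scrollTo for the offsets).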

Limitations and workarounds:

- JS heap: We don’t clone arbitrary JS heap. Instead, we short-circuit by simulating common interactions (clicking links, expanding accordions) where effects are DOM-local. For dynamic apps, we constrain lookahead depth L to avoid diverging too far without a real reload.

- Event timing: Freeze animations/timers via requestAnimationFrame shims or throttling. For evaluation, rely on DOM diffs and network traces rather than exact replay.

- Cross-origin iframes: Use OOPIF-aware enumeration (Target.getTargets) to snapshot leaf frames separately. Some cross-origin frames cannot be fully mirrored; accept partial fidelity or fall back to validating the winning branch live.
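The timer-freezing tactic can be sketched as a shim that queues callbacks instead of running them, so a branch only advances when the runner flushes. This is an illustration under our own assumptions. `TimerHost` and `freezeTimers` are hypothetical names, and the cancellation APIs (`clearTimeout`, `cancelAnimationFrame`) are not patched. In a real branch the shim would be injected before page scripts run, for example with Playwright's `addInitScript`. Because it patches whatever host object it receives, it can be exercised in Node with a fake host.

```typescript
// Shape of the global we patch: window in a page, a fake object in tests (hypothetical).
interface TimerHost {
  setTimeout: (cb: () => void, ms?: number) => unknown;
  setInterval: (cb: () => void, ms?: number) => unknown;
  requestAnimationFrame: (cb: (t: number) => void) => unknown;
}

type Pending = { id: number; kind: 'timeout' | 'interval' | 'raf'; cb: (t: number) => void };

// Replace timer APIs with queuing versions. Nothing runs until flush() is called.
// Note: clearTimeout/cancelAnimationFrame are NOT patched in this sketch.
function freezeTimers(host: TimerHost) {
  let nextId = 1;
  let queue: Pending[] = [];
  host.setTimeout = (cb) => { const id = nextId++; queue.push({ id, kind: 'timeout', cb: () => cb() }); return id; };
  host.setInterval = (cb) => { const id = nextId++; queue.push({ id, kind: 'interval', cb: () => cb() }); return id; };
  host.requestAnimationFrame = (cb) => { const id = nextId++; queue.push({ id, kind: 'raf', cb }); return id; };

  return {
    pending: () => queue.length,
    // One "tick": every callback queued before this call fires once.
    // Intervals are re-queued for the next tick rather than looping.
    flush(now = 0): number {
      const batch = queue;
      queue = [];
      for (const p of batch) {
        p.cb(now);
        if (p.kind === 'interval') queue.push(p);
      }
      return batch.length;
    },
  };
}
```

The runner can then call `flush()` a bounded number of times per lookahead step, which keeps animation-driven DOM churn from swamping the diff signals.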

4) Branch-and-bound exploration loop

A simple algorithmic skeleton:

- Input: State S0 (page + storage), goal G, budget B (time/compute), beam width K, lookahead L.

- Loop: propose top-K actions from S0; fork K branches; advance each up to L steps; capture telemetry; score; keep top-k′ branches; repeat from best branch state until success or budget exhaustion.

- Guarantee: do not perform writes during speculation; represent them as intents.

Pseudocode (TypeScript-ish):

```ts
// Helpers (forkFromSnapshot, forkBranch, proposeActions, runActionSafely,
// collectTelemetry, baselineScore, score, prune, isGoalSatisfied, commitPhase) are assumed.
interface BranchState {
  id: string;
  parentId?: string;
  depth: number;
  tab: BrowserContext;   // or Playwright Page
  snapshotId: string;    // reference to base DOM/storage snapshot
  actions: Action[];     // steps taken so far
  telemetry: Telemetry;
  utility: number;       // score
  intents: Intent[];     // staged writes only
}

async function speculativePlanAndAct(S0: Snapshot, G: Goal, B: Budget) {
  const queue = new BoundedPriorityQueue<BranchState>(B.beamWidth);
  const root = await forkFromSnapshot(S0);
  root.utility = baselineScore(root, G);
  queue.push(root);
  let best: BranchState = root; // best branch seen so far, even after it is popped
  const start = Date.now();

  while (!queue.empty() && Date.now() - start < B.wallMs) {
    const branch = queue.popMax()!;
    if (isGoalSatisfied(branch, G)) return commitPhase(branch);

    const proposals = await proposeActions(branch, G, B.topK);
    // Explore all proposals concurrently; each child runs behind the write firewall.
    const children = await Promise.all(
      proposals.map(async (action) => {
        const child = await forkBranch(branch); // cheap clone of storage + DOM basis; sets parentId, depth + 1
        await runActionSafely(child, action, { blockWrites: true });
        child.actions.push(action);
        child.telemetry = await collectTelemetry(child);
        child.utility = score(child, G);
        return child;
      }),
    );
    const survivors = prune(children, B.beamWidth);
    // Release pruned branches' browser contexts right away.
    await Promise.all(children.filter((c) => !survivors.includes(c)).map((c) => c.tab.close()));
    for (const s of survivors) {
      if (s.utility > best.utility) best = s;
      queue.push(s);
    }
  }
  // Budget exhausted: best-effort commit of the best branch seen. commitPhase still
  // applies policy gates and precondition checks, so low-confidence intents are refused there.
  return commitPhase(best);
}
```

Keys to making this fast and safe:

- runActionSafely enforces a write firewall. Intercept network traffic via Fetch or Playwright route: allow safe GET/HEAD requests, and divert POST/PUT/PATCH/DELETE into a virtual sink that creates Intent objects instead of hitting the network.

- forkBranch should take on the order of 10–100 ms. Pre-warm contexts, reuse processes, and apply DOM snapshots lazily (only the differences).

- prune uses a composite score with strong regularization against long, meandering branches.
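The pseudocode leaves `BoundedPriorityQueue` and `prune` abstract. Here is a minimal sketch that assumes a branch exposes only `id`, `utility`, and `depth`, a trimmed-down stand-in for `BranchState`. The regularizer is a hypothetical length penalty `lambda * depth`, and ties break on `id` so pruning is deterministic. A sorted array is adequate at single-digit beam widths; a heap would only pay off for much larger beams.

```typescript
interface Scored { id: string; utility: number; depth: number }

// Regularized utility: penalize long, meandering branches (lambda is an assumed knob).
function adjusted(b: Scored, lambda: number): number {
  return b.utility - lambda * b.depth;
}

// Deterministic order: higher adjusted score first, then lexicographic id.
function byScore<T extends Scored>(lambda: number) {
  return (a: T, b: T) => adjusted(b, lambda) - adjusted(a, lambda) || a.id.localeCompare(b.id);
}

// Keep the top `width` branches by regularized score.
function prune<T extends Scored>(branches: T[], width: number, lambda = 0.1): T[] {
  return [...branches].sort(byScore<T>(lambda)).slice(0, width);
}

// Priority queue that never holds more than `capacity` items; the weakest is evicted.
class BoundedPriorityQueue<T extends Scored> {
  private items: T[] = [];
  constructor(private capacity: number, private lambda = 0.1) {}
  empty(): boolean { return this.items.length === 0; }
  size(): number { return this.items.length; }
  push(item: T): void {
    this.items.push(item);
    this.items.sort(byScore<T>(this.lambda));
    if (this.items.length > this.capacity) this.items.pop(); // drop the lowest-ranked
  }
  peekMax(): T | undefined { return this.items[0]; }
  popMax(): T | undefined { return this.items.shift(); }
}
```

With `lambda = 0.1`, a depth-3 branch needs 0.2 more raw utility than a depth-1 branch to rank equally. That is the "strong regularization" knob to tune.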

5) Causal telemetry: attribute, then score

Naively scoring by “does the screen look closer to the goal?” fails on modern web apps. We need a causal view: which action produced which change and why it matters.

Signals to collect per branch:

- DOM deltas: node additions/removals, attribute changes, text diffs; semantic extraction of headings, buttons, form labels.

- Network traces: requests/responses, status codes, content types; mark requests causally triggered by a given click via the CDP Initiator field.

- Performance timings: navigationStart, FCP/LCP, TTI approximations, and the count of long tasks (>50 ms).

- Selector stability: how stable are discovered element locators across rerenders (CSS/XPath scoring, data-testid presence)?

- Goal proximity: embedding similarity between textual goal description and extracted page content; schema matching for target entities (e.g., “price”, “availability”, “order status”).

- Risk indicators: presence of sensitive forms, captchas, hidden iframes, payment flows.

Causal graph construction:

- Build a DAG where nodes are Actions, DOM mutations, and Network requests; edges represent causality (e.g., click -> XHR -> DOM mutation of .results-list).

- Use CDP’s Network.requestWillBeSent initiator and Page.lifecycleEvent to wire edges, with Performance.getMetrics supplying timing context.

- For SPAs, attribute Virtual DOM patches by correlating microtask timestamps with event handlers invoked after action.
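The edge-wiring rules above can be sketched with plain data. This sketch assumes simplified, already-normalized events whose shapes are our own, not raw CDP payloads. A request carries the id of the action that initiated it (in a real system, recovered from `Network.requestWillBeSent`'s `initiator`). A DOM mutation is attributed to the latest request that finished shortly before it, falling back to the latest preceding action for DOM-local effects. The 200 ms window is an assumed tuning value.

```typescript
type TelemetryNode =
  | { kind: 'action'; id: string; t: number }
  | { kind: 'request'; id: string; t: number; finishedAt: number; initiatorActionId?: string }
  | { kind: 'mutation'; id: string; t: number; target: string };

interface CausalEdge { from: string; to: string }
type RequestNode = Extract<TelemetryNode, { kind: 'request' }>;

function buildCausalGraph(nodes: TelemetryNode[], windowMs = 200): CausalEdge[] {
  const edges: CausalEdge[] = [];
  const actions = nodes.filter((n) => n.kind === 'action').sort((a, b) => a.t - b.t);
  const requests = nodes.filter((n): n is RequestNode => n.kind === 'request');

  // click -> XHR: taken from the (pre-resolved) initiator.
  for (const r of requests) {
    if (r.initiatorActionId) edges.push({ from: r.initiatorActionId, to: r.id });
  }
  for (const m of nodes) {
    if (m.kind !== 'mutation') continue;
    // XHR -> DOM mutation: latest request that finished within windowMs before it.
    const cause = requests
      .filter((r) => r.finishedAt <= m.t && m.t - r.finishedAt <= windowMs)
      .sort((a, b) => b.finishedAt - a.finishedAt)[0];
    if (cause) {
      edges.push({ from: cause.id, to: m.id });
      continue;
    }
    // Otherwise a DOM-local effect: attribute it to the latest preceding action.
    const act = actions.filter((a) => a.t <= m.t).pop();
    if (act) edges.push({ from: act.id, to: m.id });
  }
  return edges;
}

// Which actions (transitively) caused a node? Walk the edges backwards.
function rootActions(edges: CausalEdge[], nodeId: string, actionIds: Set<string>): string[] {
  const found = new Set<string>();
  const seen = new Set<string>();
  const stack = [nodeId];
  while (stack.length) {
    const cur = stack.pop()!;
    if (seen.has(cur)) continue;
    seen.add(cur);
    if (actionIds.has(cur)) found.add(cur);
    for (const e of edges) if (e.to === cur) stack.push(e.from);
  }
  return [...found];
}
```

Timing-based attribution is a heuristic. Overlapping requests can be misattributed, which is one reason the scorer should treat the graph as evidence rather than ground truth.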

Scoring function example:

```ts
// embedSim, sum, median, quality, and risk are assumed helpers.
function score(b: BranchState, G: Goal): number {
  const t = b.telemetry;
  const proximity = embedSim(G.text, t.extractedText);
  const delta = Math.min(1, t.domDeltaSize / 2000); // scaled change magnitude
  // +1 in the denominator smooths branches with few or no requests
  const requestQuality = sum(t.requests.map((r) => quality(r))) / (t.requests.length + 1);
  // Guard the empty case so median([]) cannot poison the score with NaN
  const selectorStability = t.selectorStability.length ? median(t.selectorStability) : 0;
  const riskPenalty = risk(t) ? -1.5 : 0;
  const depthPenalty = -0.1 * b.depth;
  return 2.0 * proximity + 0.7 * delta + 0.5 * requestQuality
       + 0.3 * selectorStability + riskPenalty + depthPenalty;
}
```

quality(req) could favor 2xx statuses and HTML/JSON content types while penalizing analytics and ad requests. embedSim can be a small vector model to keep it cheap.

The goal is not perfect grading; it’s fast triage. You will validate the winner with a live replay anyway.
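`quality` and `embedSim` are left abstract above. The sketch below fills them in under stated assumptions. The noise-host list is a hypothetical placeholder. `embedSim` is replaced by bag-of-words cosine similarity, a cheap lexical stand-in rather than the small vector model the text suggests. Swap in real embeddings once the plumbing works.

```typescript
interface RequestRecord { url: string; status: number; contentType: string }

// Hypothetical blocklist; a real deployment would use a maintained list.
const NOISE_HOSTS = ['google-analytics.com', 'doubleclick.net', 'segment.io'];

// Score one request in [0, 1]: successful, content-bearing, non-tracking requests rank highest.
function quality(r: RequestRecord): number {
  const host = new URL(r.url).hostname;
  if (NOISE_HOSTS.some((h) => host === h || host.endsWith('.' + h))) return 0;
  const ok = r.status >= 200 && r.status < 300 ? 1 : 0;
  const useful = /text\/html|application\/json/.test(r.contentType) ? 1 : 0.3;
  return ok * useful;
}

// Lexical stand-in for embedSim: cosine similarity of lowercase token counts.
function embedSim(a: string, b: string): number {
  const vec = (s: string) => {
    const m = new Map<string, number>();
    for (const tok of s.toLowerCase().match(/[a-z0-9]+/g) ?? []) m.set(tok, (m.get(tok) ?? 0) + 1);
    return m;
  };
  const va = vec(a);
  const vb = vec(b);
  let dot = 0;
  for (const [k, v] of va) dot += v * (vb.get(k) ?? 0);
  const norm = (m: Map<string, number>) => Math.sqrt([...m.values()].reduce((s, v) => s + v * v, 0));
  const denom = norm(va) * norm(vb);
  return denom === 0 ? 0 : dot / denom; // empty text on either side scores 0
}
```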

6) Exactly-once writes via an intent ledger

Speculation must not cause double payments, duplicate emails, or spurious sign-ups. We split “decide” from “do” using a two-phase approach:

- Phase A (speculate): Convert any write attempt into a structured Intent and block the actual network write. Example intents:

- FormSubmitIntent(formId, fields, targetUrl)

- PurchaseIntent(cartId, items, total, currency)

- ApiMutationIntent(endpoint, method, bodyHash)

- Phase B (commit): After selecting a winning branch, validate preconditions using fresh reads, acquire a commit token (lease), and execute the write exactly once using idempotency keys and anti-duplication checks.

Intent structure:

```ts
interface IntentBase {
  intentId: string;              // UUID v7
  site: string;                  // eTLD+1
  type: 'FormSubmit' | 'ApiMutation' | 'Purchase'; // | ... further intent kinds
  createdAt: string;             // ISO-8601 timestamp
  preconditions: Precondition[]; // e.g., cart has N items, price unchanged
  effects: Effect[];             // expected postconditions or heuristics
  idempotencyKey: string;        // stable across retries and branches
  risk: RiskProfile;             // estimated risk, PII, payment
  provenance: { branchId: string; actions: Action[]; telemetryHash: string };
}
```

Ledger semantics:

- Append-only, tamper-evident: store in a write-once log (e.g., Kafka topic + immutable store) with cryptographic hash chaining for audit.

- Uniqueness: enforce unique (site, idempotencyKey) pairs; duplicates are rejected at the gate.

- Precondition checks: execute reads to confirm intent is still valid, else require replan.

- Leases: when committing, mark intent as COMMITTING with a short lease to avoid split-brain.
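The four ledger properties can be illustrated with an in-memory toy. This is a sketch, not a durable store. The `ToyLedger` class, its event shape, and SHA-256 over JSON for chaining are our own assumptions standing in for the Kafka-plus-immutable-store setup. It rejects duplicate `(site, idempotencyKey)` pairs, chains every event to the previous hash so edits are detectable, and grants a time-bounded lease to one holder at a time.

```typescript
import { createHash } from 'node:crypto';

type LedgerState = 'PENDING' | 'COMMITTING' | 'COMMITTED' | 'FAILED';
interface LedgerEvent { seq: number; intentId: string; state: LedgerState; prevHash: string; hash: string }

// Hash of an event's content plus the previous hash: editing any event breaks the chain.
function eventHash(seq: number, intentId: string, state: LedgerState, prevHash: string): string {
  return createHash('sha256').update(JSON.stringify([seq, intentId, state, prevHash])).digest('hex');
}

class ToyLedger {
  private log: LedgerEvent[] = [];
  private keys = new Map<string, string>(); // JSON [site, idempotencyKey] -> intentId
  private leases = new Map<string, { holder: string; expiresAt: number }>();

  private write(intentId: string, state: LedgerState): LedgerEvent {
    const seq = this.log.length;
    const prevHash = seq === 0 ? 'genesis' : this.log[seq - 1].hash;
    const ev = { seq, intentId, state, prevHash, hash: eventHash(seq, intentId, state, prevHash) };
    this.log.push(ev); // append-only: events are never updated in place
    return ev;
  }

  // Uniqueness: a second intent with the same (site, idempotencyKey) is rejected.
  register(intentId: string, site: string, idempotencyKey: string): LedgerEvent {
    const uniq = JSON.stringify([site, idempotencyKey]);
    if (this.keys.has(uniq)) throw new Error(`DuplicateIntent: ${site} ${idempotencyKey}`);
    this.keys.set(uniq, intentId);
    return this.write(intentId, 'PENDING');
  }

  // Lease: exclusive until expiry. After expiry another worker may take over, which is
  // why the executed write must still carry the idempotency key.
  lease(intentId: string, holder: string, ttlMs: number, now = Date.now()): boolean {
    const cur = this.leases.get(intentId);
    if (cur && cur.holder !== holder && cur.expiresAt > now) return false;
    this.leases.set(intentId, { holder, expiresAt: now + ttlMs });
    this.write(intentId, 'COMMITTING');
    return true;
  }

  setState(intentId: string, state: LedgerState): void { this.write(intentId, state); }

  history(intentId: string): LedgerState[] {
    return this.log.filter((e) => e.intentId === intentId).map((e) => e.state);
  }

  // Tamper evidence: recompute the whole chain from the start.
  verify(): boolean {
    let prev = 'genesis';
    for (const e of this.log) {
      if (e.prevHash !== prev || e.hash !== eventHash(e.seq, e.intentId, e.state, prev)) return false;
      prev = e.hash;
    }
    return true;
  }
}
```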

Policy gates:

- ABAC/RBAC rules (OPA/Rego or Cedar): Who/what can commit this class of intent? Under what conditions (e.g., transaction total <= $100, vendor in allowlist)?

- Risk thresholds: If risk > X, require human approval.

- Rate limits and budgets: Global per-site QPS limits, daily caps.

- Sanitizers: Strip PII where possible; never store full card data.
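Production rules would more naturally live in OPA/Rego or Cedar, as suggested above. The same decision logic is sketched here in TypeScript so it can be tested alongside the agent. Every threshold is a hypothetical placeholder: a $100 purchase cap, a single-site allowlist, a 0.7 risk cutoff for human review, and a daily cap of 50 commits. The three-valued result separates outright denial from escalation to a human.

```typescript
type GateDecision =
  | { effect: 'allow' }
  | { effect: 'deny'; reason: string }
  | { effect: 'review'; reason: string };

interface GateInput {
  type: 'FormSubmit' | 'ApiMutation' | 'Purchase';
  site: string;          // eTLD+1
  totalUsd?: number;     // purchases only
  riskScore: number;     // 0..1
  commitsToday: number;  // for this site
}

interface GatePolicy { maxPurchaseUsd: number; allowlist: Set<string>; reviewAbove: number; dailyCap: number }

// Illustrative values only.
const DEFAULT_POLICY: GatePolicy = {
  maxPurchaseUsd: 100,
  allowlist: new Set(['example-retail.com']),
  reviewAbove: 0.7,
  dailyCap: 50,
};

function gateDecision(i: GateInput, p: GatePolicy = DEFAULT_POLICY): GateDecision {
  // Hard rules first: budgets, allowlists, and spend caps deny outright.
  if (i.commitsToday >= p.dailyCap) return { effect: 'deny', reason: 'daily cap reached' };
  if (!p.allowlist.has(i.site)) return { effect: 'deny', reason: `site not allowlisted: ${i.site}` };
  if (i.type === 'Purchase' && (i.totalUsd === undefined || i.totalUsd > p.maxPurchaseUsd)) {
    return { effect: 'deny', reason: 'purchase total missing or above cap' };
  }
  // Soft rule: permitted but risky intents are escalated to a human.
  if (i.riskScore > p.reviewAbove) return { effect: 'review', reason: `risk ${i.riskScore} > ${p.reviewAbove}` };
  return { effect: 'allow' };
}
```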

Commit executor:

- Apply with real network calls in a clean, non-speculative context derived from the winning branch’s latest known state; include idempotencyKey in headers or hidden fields.

- Verify effect: Confirm the expected state change; reconcile with ledger (mark COMMITTED) or handle COMPENSATION if necessary.
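The executor's bookkeeping implies a small state machine per intent. This sketch assumes the states named in this section plus a `COMPENSATED` terminal state for writes that were later undone. It also allows `COMMITTING` to repeat when an expired lease is taken over. Encoding transitions as a table makes illegal jumps, such as `PENDING` straight to `COMMITTED` or committing twice, fail loudly.

```typescript
type IntentState = 'PENDING' | 'COMMITTING' | 'COMMITTED' | 'FAILED' | 'COMPENSATED';

const TRANSITIONS: Record<IntentState, IntentState[]> = {
  PENDING: ['COMMITTING', 'FAILED'],                  // lease acquired, or rejected before execution
  COMMITTING: ['COMMITTING', 'COMMITTED', 'FAILED'],  // lease takeover, verified effect, or failure
  COMMITTED: ['COMPENSATED'],                         // after success, only an undo is allowed
  FAILED: [],                                         // replanning creates a new intent instead
  COMPENSATED: [],
};

function transition(from: IntentState, to: IntentState): IntentState {
  if (!TRANSITIONS[from].includes(to)) throw new Error(`Illegal transition ${from} -> ${to}`);
  return to;
}

// Replay a recorded history, validating every step; returns the final state.
function replay(history: IntentState[]): IntentState {
  if (history[0] !== 'PENDING') throw new Error('History must start at PENDING');
  return history.slice(1).reduce<IntentState>((s, next) => transition(s, next), 'PENDING');
}
```

Running `replay` over the ledger during audits is a cheap way to catch executor bugs before they show up as duplicate writes.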

7) Implementation blueprint (Playwright + CDP, Node/TypeScript)

7.1) Process model

- One browser process, multiple contexts: a base context for S0, K speculative contexts per iteration, recycled aggressively.

- A pool of branch runners (N workers) bound by CPU/IO budget.

- A telemetry collector service (WebSocket/gRPC) to ingest CDP events.

- A small vector embedding service for goal proximity.

- A ledger service (HTTP/gRPC) fronting an append-only store.

7.2) Session cloning and DOM snapshotting

```ts
import type { Browser, Page } from 'playwright';

// Capture DOM/layout (via CDP, so Chromium only), cookies, web storage, and scroll position.
async function captureSnapshot(page: Page) {
  const cdp = await page.context().newCDPSession(page);
  const { documents, strings } = await cdp.send('DOMSnapshot.captureSnapshot', {
    computedStyles: ['display', 'visibility', 'opacity'],
    includeDOMRects: true,
    includePaintOrder: false,
  });
  await cdp.detach();
  const cookies = await page.context().cookies();
  const storage = await page.evaluate(() => ({
    local: Object.entries(localStorage),
    session: Object.entries(sessionStorage),
  }));
  const scroll = await page.evaluate(() => ({ x: window.scrollX, y: window.scrollY }));
  return { url: page.url(), snapshot: { documents, strings }, cookies, storage, scroll };
}

type BaseSnapshot = Awaited<ReturnType<typeof captureSnapshot>>;

async function forkFromSnapshot(browser: Browser, base: BaseSnapshot) {
  const origin = new URL(base.url).origin;
  // Web storage is origin-scoped and cannot be written from about:blank,
  // so seed cookies and localStorage through storageState instead.
  const ctx = await browser.newContext({
    viewport: { width: 1366, height: 768 },
    userAgent: 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36',
    storageState: {
      cookies: base.cookies,
      origins: [{ origin, localStorage: base.storage.local.map(([name, value]) => ({ name, value })) }],
    },
  });
  // storageState does not cover sessionStorage: restore it before page scripts run,
  // once per tab (the sentinel key keeps later navigations from clobbering branch state).
  await ctx.addInitScript(({ origin, entries }) => {
    if (location.origin !== origin || sessionStorage.getItem('__forkRestored')) return;
    for (const [k, v] of entries) sessionStorage.setItem(k, v);
    sessionStorage.setItem('__forkRestored', '1');
  }, { origin, entries: base.storage.session });
  const page = await ctx.newPage();
  // Option A: goto(base.url), let cookies/storage take effect, then restore base.scroll
  //           with window.scrollTo (safe, slower)
  // Option B: render a static clone offscreen for quick DOM lookahead (fast, limited)
  return { context: ctx, page };
}
```

In practice, you’ll combine Option A and B: do fast static lookahead in an offscreen clone, and revalidate the winner by reloading the live URL in the commit context.

7.3) Network firewall and intent capture

```ts
import type { Page } from 'playwright';
// FormSubmitIntent, ApiMutationIntent, PurchaseIntent, uuidv7, stableHash, and etld1 are assumed.
type Intent = FormSubmitIntent | ApiMutationIntent | PurchaseIntent;

// page.route does not see requests served by a Service Worker; create branch contexts
// with { serviceWorkers: 'block' } so no write can slip past the firewall.
async function installFirewall(page: Page, intents: Intent[]) {
  await page.route('**/*', async (route) => {
    const req = route.request();
    const method = req.method();
    const url = req.url();
    // Allow safe reads (and CORS preflights)
    if (method === 'GET' || method === 'HEAD' || method === 'OPTIONS') return route.continue();
    // Convert writes (POST/PUT/PATCH/DELETE, ...) into intents; don't send them
    const body = req.postData() ?? '';
    intents.push(toIntent(method, url, body));
    return route.fulfill({ status: 202, body: 'Speculative-Write-Captured' });
  });
}

function toIntent(method: string, url: string, body: string): Intent {
  // Bodies with timestamps or nonces will not hash stably; normalize them first.
  const idempotencyKey = stableHash([method, url, body]);
  const base = {
    intentId: uuidv7(),
    site: etld1(url),
    createdAt: new Date().toISOString(),
    preconditions: [],
    effects: [],
    idempotencyKey,
    provenance: { /* ... */ },
  };
  // Heuristics: classify purchase vs generic API mutation
  if (/\/checkout|payment|card/.test(url) || /"card"|"cvv"/.test(body)) {
    return { ...base, type: 'Purchase', risk: { level: 'high' } } as PurchaseIntent;
  }
  return { ...base, type: 'ApiMutation', risk: { level: 'medium' } } as ApiMutationIntent;
}
```

7.4) Telemetry collection and causal graph

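```ts
import type { Page } from 'playwright';
// TelemetryEvent is an assumed event type.
async function attachTelemetry(page: Page, sink: (e: TelemetryEvent) => void) {
  const cdp = await page.context().newCDPSession(page);
  await cdp.send('Network.enable');
  await cdp.send('Performance.enable');
  await cdp.send('Page.enable');
  // Page.lifecycleEvent only fires after lifecycle events are explicitly enabled
  await cdp.send('Page.setLifecycleEventsEnabled', { enabled: true });
  cdp.on('Network.requestWillBeSent', (e) => sink({ type: 'request', e })); // e.initiator feeds causal edges
  cdp.on('Network.responseReceived', (e) => sink({ type: 'response', e }));
  cdp.on('Network.loadingFinished', (e) => sink({ type: 'loadingFinished', e }));
  cdp.on('Page.lifecycleEvent', (e) => sink({ type: 'lifecycle', e }));

  // Expose the bridge before the init script that calls it. Both apply only to
  // documents loaded after this point, so attach telemetry before navigating.
  await page.exposeFunction('__emit', (payload: TelemetryEvent) => sink(payload));
  // DOM mutation sampling via an injected MutationObserver
  await page.addInitScript(() => {
    const obs = new MutationObserver((mutations) => {
      (window as any).__emit?.({ type: 'domMutation', payload: { time: performance.now(), count: mutations.length } });
    });
    obs.observe(document, { subtree: true, childList: true, attributes: true, characterData: true });
  });
}
```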

Build a small library to stitch events into a per-branch DAG keyed by action IDs and CDP initiator stacks.

7.5) Proposer: LLM action generator

Use a constrained grammar to produce top-K actions with rationales, e.g., click(selector), fill(selector, value), press(key), goto(url). Feed it a distilled page state: list of visible buttons/links with text, forms with labels and empty fields, headings, and the goal.

Prompt sketch:

You are a web agent. Given the goal and page artifact below, propose up to K next actions.

- Prefer obvious progress toward goal.

- Avoid any finalizing purchase or destructive actions; represent them as Intent(type=Purchase, ...).

Output JSON actions only.

Goal: {G}

Artifact: {summary_of_DOM_and_affordances}

Ensure the proposer is temperature-controlled and returns ranked, diverse actions. Optionally, fine-tune on BrowserGym/MiniWoB++-style tasks.
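A closed action schema is easiest to enforce at parse time. This is a minimal sketch that assumes the four verbs above and a JSON-array output from the proposer. The field names (`op`, `selector`, `value`, `key`, `url`, `rationale`) are our own choice, not a standard. Malformed candidates are dropped rather than repaired, `goto` is restricted to http(s) URLs, and duplicates are removed while preserving the proposer's ranking.

```typescript
type AgentAction =
  | { op: 'click'; selector: string; rationale?: string }
  | { op: 'fill'; selector: string; value: string; rationale?: string }
  | { op: 'press'; key: string; rationale?: string }
  | { op: 'goto'; url: string; rationale?: string };

const isStr = (v: unknown): v is string => typeof v === 'string' && v.length > 0;

// Validate one candidate; return a typed action or null.
function toAction(raw: unknown): AgentAction | null {
  if (typeof raw !== 'object' || raw === null) return null;
  const { op, selector, value, key, url, rationale } = raw as Record<string, unknown>;
  const why = typeof rationale === 'string' ? rationale : undefined;
  switch (op) {
    case 'click':
      return isStr(selector) ? { op: 'click', selector, rationale: why } : null;
    case 'fill': // empty value allowed: clearing a field is legitimate
      return isStr(selector) && typeof value === 'string' ? { op: 'fill', selector, value, rationale: why } : null;
    case 'press':
      return isStr(key) ? { op: 'press', key, rationale: why } : null;
    case 'goto': {
      if (!isStr(url)) return null;
      try {
        const u = new URL(url);
        return u.protocol === 'http:' || u.protocol === 'https:' ? { op: 'goto', url: u.href, rationale: why } : null;
      } catch {
        return null; // not a parseable absolute URL
      }
    }
    default:
      return null; // unknown verbs (e.g., "submitPayment") are rejected
  }
}

// Parse proposer output: keep valid actions in ranked order, de-duplicate, cap at K.
function parseProposals(text: string, k: number): AgentAction[] {
  let data: unknown;
  try { data = JSON.parse(text); } catch { return []; }
  if (!Array.isArray(data)) return [];
  const seen = new Set<string>();
  const out: AgentAction[] = [];
  for (const raw of data) {
    const a = toAction(raw);
    if (!a) continue;
    const dedupKey = JSON.stringify({ ...a, rationale: undefined }); // rationale doesn't make it distinct
    if (seen.has(dedupKey)) continue;
    seen.add(dedupKey);
    out.push(a);
    if (out.length === k) break;
  }
  return out;
}
```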

7.6) Commit phase: revalidation and execution

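```ts
// consolidateIntents, policyCheck, forkFromSnapshot, winnerFinalUrl, checkPreconditions,
// ledger, installWriteThrough, replayFinalActions, and verifyEffects are assumed helpers.
async function commitPhase(winner: BranchState) {
  // 1) Synthesize intents from the winner
  const intents = consolidateIntents(winner.intents);

  // 2) Policy gate
  for (const intent of intents) {
    const allowed = await policyCheck(intent);
    if (!allowed) throw new Error('PolicyDenied: ' + intent.intentId);
  }

  // 3) Precondition revalidation in a fresh, non-speculative context
  const live = await forkFromSnapshot(/* winner.base or reload site */);
  try {
    await live.page.goto(winnerFinalUrl(winner));
    const stillValid = await checkPreconditions(live.page, intents);
    if (!stillValid) throw new Error('StalePreconditions');

    // 4) Record PENDING and acquire a lease per intent
    //    (ledger.append rejects a duplicate (site, idempotencyKey) pair)
    const leased: Intent[] = [];
    try {
      for (const intent of intents) {
        await ledger.append({ ...intent, state: 'PENDING' });
        const lease = await ledger.lease(intent.intentId, 15_000);
        if (!lease.granted) throw new Error('LeaseDenied: ' + intent.intentId);
        leased.push(intent);
      }

      // 5) Execute exactly once: allow writes now, injecting idempotency headers
      await installWriteThrough(live.page, intents);
      await replayFinalActions(live.page, winner.actions);

      // 6) Verify effects and finalize the ledger
      const ok = await verifyEffects(live.page, intents);
      for (const intent of intents) {
        await ledger.setState(intent.intentId, ok ? 'COMMITTED' : 'FAILED');
      }
      return ok;
    } catch (err) {
      // Don't leave leased intents dangling; if a write may already have gone out,
      // route them to reconciliation rather than a blind FAILED.
      for (const intent of leased) await ledger.setState(intent.intentId, 'FAILED');
      throw err;
    }
  } finally {
    await live.context.close();
  }
}
```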

installWriteThrough should add headers like Idempotency-Key: <key> for API calls, or append hidden inputs in forms. For third-party sites without idempotency support, emulate with a client-side deduplication token (e.g., unique memo field) and reconcile by reading back state (e.g., order number) before finalizing.
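The header-injection half of `installWriteThrough` can be sketched as a pure function that the commit context's route handler would apply before continuing a request. Some assumptions apply. The `Idempotency-Key` header follows the convention used by some payment APIs such as Stripe, and servers that do not support it will ignore it. Writes not covered by a committed intent stay blocked. `dedupToken` is a hypothetical helper that derives a short memo-field token from the intent's key, so a later read-back can find the resulting order.

```typescript
import { createHash } from 'node:crypto';

interface PendingWrite { method: string; url: string; headers: Record<string, string> }
interface CommitIntent { intentId: string; idempotencyKey: string; method: string; urlPattern: RegExp }

const WRITE_METHODS = new Set(['POST', 'PUT', 'PATCH', 'DELETE']);

// Headers for a live request: reads pass through, a planned write gets its intent's
// key, and an unplanned write returns null (the caller should abort it).
function withIdempotency(req: PendingWrite, intents: CommitIntent[]): Record<string, string> | null {
  const method = req.method.toUpperCase();
  if (!WRITE_METHODS.has(method)) return req.headers;
  const match = intents.find((i) => i.method === method && i.urlPattern.test(req.url));
  if (!match) return null;
  return { ...req.headers, 'Idempotency-Key': match.idempotencyKey };
}

// Short deterministic token for sites without idempotency support: put it in a memo or
// notes field, then search order history for it before any retry.
function dedupToken(idempotencyKey: string): string {
  return 'agt-' + createHash('sha256').update(idempotencyKey).digest('hex').slice(0, 12);
}
```

Use `urlPattern` regexes without the `g` flag. With `g`, `RegExp.prototype.test` keeps state in `lastIndex` between calls.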

8) Case study: Finding a product and adding to cart

Goal: “Find the Anker 737 power bank and add one to the cart on example-retail.com.”

Proposals:

- A1: Focus search bar, type “Anker 737 power bank”, press Enter.

- A2: Click “Power Banks” category, then filter by brand “Anker”.

- A3: Navigate to /search?q=anker+737 directly.

Fork K=3 branches and advance L=2 steps each:

- B1 (A1): Search executed; XHR to /api/search; DOM delta: results grid appears; top card matches query. Utility high.

- B2 (A2): Category page loads; filter drawer toggles; results unrelated; utility moderate.

- B3 (A3): Direct URL; SSR results return; similar to B1 but slower; utility slightly lower.

Prune to B1; next proposals:

- A4: Click first result with title containing “737”.

- A5: Sort by relevance.

B1->A4: PDP loads; “Add to Cart” button visible; speculative click produces ApiMutationIntent(POST /cart/add, bodyHash=...). Firewall captures intent; utility very high but risk medium.

Decision: Select B1 path. Commit phase revalidates PDP, replays Add to Cart with Idempotency-Key, confirms cart count increment and line item present. Ledger marks COMMITTED.

Other branches are discarded; no writes emitted. Wall time savings: instead of two serial missteps, speculation got to PDP faster and ensured a safe single write.

9) Performance engineering

- Context pooling: Maintain a warm pool of offscreen contexts pre-initialized with cookies and blank pages to cut fork latency to 10–30ms.

- Lightweight diffs: Use a robust DOM differ (e.g., tree fingerprinting) instead of full snapshots at each step. Emit structural hashes per subtree (e.g., SimHash) for O(Δ) scoring.

- Budget-aware branching: Dynamically adapt K and L based on observed entropy of the proposer and recent utility gains. If the proposer is confident (entropy low), shrink K.

- Speculation window: Don’t speculate too far ahead. For high-risk flows (payments), cap L at 1–2 and rely on commit validation.

- Cache and replay: Record network GETs for the parent state (response body + headers) and serve them to speculative branches from a cache for near-instant load while preserving DOM fidelity.
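Subtree fingerprinting can be sketched with Merkle-style hashes. Each node's hash covers its tag, attributes, text, and its children's hashes, so two snapshots are diffed by descending only into subtrees whose hashes differ. Hashing is O(n) once per snapshot, and the descent afterwards visits only changed regions. This sketch uses exact SHA-256 over a hypothetical simplified node shape (`VNode`) rather than SimHash. SimHash would add tolerance to near-identical subtrees, which exact hashes lack.

```typescript
import { createHash } from 'node:crypto';

interface VNode { tag: string; attrs?: Record<string, string>; text?: string; children?: VNode[] }

// A node's own content, with attributes sorted so attribute order doesn't matter.
const shell = (n: VNode) =>
  JSON.stringify([n.tag, Object.entries(n.attrs ?? {}).sort(([x], [y]) => x.localeCompare(y)), n.text ?? '']);

// Memoized Merkle hash of a subtree.
function fingerprint(n: VNode, memo: Map<VNode, string>): string {
  const cached = memo.get(n);
  if (cached !== undefined) return cached;
  const kids = (n.children ?? []).map((c) => fingerprint(c, memo));
  const h = createHash('sha256').update(shell(n) + '|' + kids.join(',')).digest('hex');
  memo.set(n, h);
  return h;
}

// Child-index paths of the smallest changed subtrees ('/' = root). Children are matched
// by index, so an insertion reports the parent; a keyed matcher would be finer-grained.
function changedPaths(a: VNode, b: VNode, path: number[] = [], memo = new Map<VNode, string>()): string[] {
  if (fingerprint(a, memo) === fingerprint(b, memo)) return []; // identical subtree: skip it
  const ca = a.children ?? [];
  const cb = b.children ?? [];
  if (shell(a) !== shell(b) || ca.length !== cb.length) return [path.join('/') || '/'];
  return ca.flatMap((c, i) => changedPaths(c, cb[i], [...path, i], memo));
}
```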

10) Reliability and determinism

- Deterministic seeds: Fix random seeds for proposer LLM sampling; use stable sorting for equivalent actions.

- Trace and replay: For debugging, record CDP event streams and network. Provide a replay mode to reconstruct a branch offline.

- Failure isolation: If a branch crashes (e.g., due to anti-bot), quarantine signals from it to avoid polluting scores.

- Health backoffs: If the site shows bot mitigations (interstitials, captchas), drop to K=1 and escalate to a human.

11) Safety, security, and compliance

- CSP and sandboxing: Use per-branch isolated storage partitions. Never share authenticated cookies across unrelated goals.

- PII handling: Redact unless essential; encrypt at rest; implement data retention windows.

- Payment card rules: Never store raw PAN/CVV. For real transactions, integrate with PCI-compliant tokenization or require explicit human approval.

- Robots.txt and terms: Respect site policies; speculation should not overload servers—apply thoughtful rate limiting and polite backoffs.

12) Integrating LLMs effectively

- Toolformer discipline: Constrain outputs to a closed action schema; validate selectors against the current DOM; auto-repair with heuristics (e.g., try nearest clickable ancestor).

- Curriculum: Train/fine-tune on synthetic sites (MiniWoB++), semi-realistic benchmarks (WebArena, BrowserGym), and your own telemetry-logged sessions.

- Uncertainty-aware branching: Use the proposer’s logprobs/entropy to decide K. If top-1 confidence > 0.8, explore fewer branches.

- VLM hybrid: For canvas-heavy or image-rich pages, use a lightweight vision encoder to label affordances, but keep DOM as the primary control surface.
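Choosing K from the proposer's uncertainty can be sketched as follows. Several assumptions apply. Each candidate action arrives with a log-probability score; many LLM APIs expose token logprobs, but aggregating them per action is out of scope here. The 0.8 confidence threshold is taken from the text above. The mapping from normalized entropy to K is a hypothetical linear rule.

```typescript
// Softmax over candidate log-probabilities (stable: subtract the max first).
function softmax(logps: number[]): number[] {
  const m = Math.max(...logps);
  const ex = logps.map((l) => Math.exp(l - m));
  const z = ex.reduce((a, b) => a + b, 0);
  return ex.map((e) => e / z);
}

// Shannon entropy normalized to [0, 1] by log(n), comparable across candidate counts.
function normalizedEntropy(p: number[]): number {
  if (p.length < 2) return 0;
  const h = -p.reduce((s, x) => (x > 0 ? s + x * Math.log(x) : s), 0);
  return h / Math.log(p.length);
}

// Beam width: 1 when the top action is confident, otherwise scale with entropy.
function chooseK(logps: number[], kMax: number, confident = 0.8): number {
  if (logps.length === 0) return 0;
  const p = softmax(logps);
  if (Math.max(...p) > confident) return 1; // confident top-1: don't branch
  const k = Math.ceil(normalizedEntropy(p) * Math.min(kMax, p.length));
  // At least 2 branches when uncertain, never more than kMax or the candidates available
  return Math.min(kMax, Math.max(2, Math.min(k, p.length)));
}
```

The same function serves budget-aware branching. Lowering `kMax` as the wall-clock budget drains shrinks the beam without changing the confidence logic.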

13) Evaluation and benchmarking

Metrics:

- Success rate: fraction of tasks completed.

- Time-to-first-commit (TTFC): wall time until winning branch commits.

- Steps-to-goal: actions taken excluding speculative dead ends.

- Duplicate-write incidents: should be zero; audit via ledger.

- Cost per task: CPU time, GPU time, network egress.
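Most of these metrics fall out of per-task run records. This sketch assumes a hypothetical `RunRecord` shape with start time, optional first-commit time, a success flag, steps on the winning path, and the idempotency keys the ledger marked committed. A duplicate-write incident is counted whenever the same key is committed more than once on the same site. Cost per task is omitted because it depends on how CPU/GPU time is metered.

```typescript
interface RunRecord {
  taskId: string;
  site: string;
  startMs: number;
  firstCommitMs?: number;   // undefined if nothing was committed
  success: boolean;
  committedSteps: number;   // actions on the winning path only (no speculative dead ends)
  committedKeys: string[];  // idempotency keys marked COMMITTED in the ledger
}

function median(xs: number[]): number {
  if (xs.length === 0) return NaN;
  const s = [...xs].sort((a, b) => a - b);
  const mid = Math.floor(s.length / 2);
  return s.length % 2 ? s[mid] : (s[mid - 1] + s[mid]) / 2;
}

function summarize(runs: RunRecord[]) {
  const ttfc = runs.filter((r) => r.firstCommitMs !== undefined).map((r) => r.firstCommitMs! - r.startMs);
  // Count commits per (site, key); every commit beyond the first is an incident.
  const commits = new Map<string, number>();
  for (const r of runs) {
    for (const k of r.committedKeys) {
      const id = `${r.site}|${k}`;
      commits.set(id, (commits.get(id) ?? 0) + 1);
    }
  }
  return {
    successRate: runs.length ? runs.filter((r) => r.success).length / runs.length : 0,
    medianTtfcMs: median(ttfc),
    medianSteps: median(runs.filter((r) => r.success).map((r) => r.committedSteps)),
    duplicateWrites: [...commits.values()].reduce((n, c) => n + (c - 1), 0),
  };
}
```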

Suggested benchmarks and datasets:

- MiniWoB++: fast iteration on forms/buttons.

- WebShop/WebArena: realistic e-commerce and open web navigation.

- BrowserGym: standardized tasks and telemetry hooks.

A/B design:

- Baseline: serial top-1 agent with the same LLM and action schema.

- Variants: beam widths K ∈ {2, 4, 8}, lookahead depths L ∈ {1, 2, 3}; scoring function ablations; with/without DOM caching.

Expected outcomes (indicative):

- TTFC reduction by 30–60% on navigational tasks.

- 10–20% absolute success gains on ambiguous flows where top-1 is frequently wrong.

- Zero duplicate writes with intent ledger enabled.

14) Related work and inspirations

- Speculative execution: CPU branch prediction and reorder buffers.

- Chrome Speculation Rules API: prefetch/prerender heuristics; conceptual kin for safe speculative loads.

- CDP DOMSnapshot and performance domains: the nuts and bolts of state capture.

- Exactly-once semantics: distributed systems patterns—idempotency keys, leases, Sagas—and event sourcing/append-only ledgers.

- Web agent benchmarks: MiniWoB++, WebArena, BrowserGym.

15) Practical pitfalls and guardrails

- Anti-bot systems: Parallel branches might look like automation. Randomize timings, respect rate limits, and consider residential proxies. Fall back to serial mode when challenged.

- Stateful SPAs: Cloning can drift if JS heap contains opaque state. Keep lookahead shallow and revalidate live before committing.

- Element volatility: Don’t overfit to brittle selectors. Prefer text+role queries, data-testid, and robust anchor strategies; maintain a selector confidence score.

- Hidden writes: Some sites mutate state on blur or focus. Treat unexpectedly triggered POSTs as intents; ratchet risk and isolate the branch.

- Mobile vs desktop: Be explicit about UA and viewport; mismatches will burn budget.
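A selector confidence score can start as a transparent heuristic. This sketch assumes selector strings modeled on Playwright's syntax (`data-testid=`, `role=`, `text=`, and `xpath=` prefixes, with anything else treated as CSS). The family weights are hypothetical. The stability multiplier is the fraction of observed re-renders in which the locator still resolved to exactly one element.

```typescript
interface SelectorObservation { selector: string; resolvedOnce: number; renders: number }

// Prior confidence by selector family: test ids and ARIA roles tend to survive redesigns
// better than positional XPath or deep CSS chains. Weights are illustrative.
function familyPrior(sel: string): number {
  if (sel.startsWith('data-testid=') || /\[data-testid=/.test(sel)) return 0.95;
  if (sel.startsWith('role=')) return 0.85;
  if (sel.startsWith('text=')) return 0.7;
  if (sel.startsWith('xpath=') || sel.startsWith('//')) return /\[\d+\]/.test(sel) ? 0.2 : 0.4;
  // Plain CSS: penalize depth and positional pseudo-classes.
  const depth = sel.split(/\s*>\s*|\s+/).filter(Boolean).length;
  const positional = /:nth-(child|of-type)/.test(sel) ? 0.3 : 0;
  return Math.max(0.1, 0.7 - 0.1 * (depth - 1) - positional);
}

// Confidence = family prior x observed stability across re-renders.
function selectorConfidence(o: SelectorObservation): number {
  const stability = o.renders > 0 ? o.resolvedOnce / o.renders : 0;
  return familyPrior(o.selector) * stability;
}
```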

16) A minimal checklist to ship v1

- Action schema and proposer with top-K output

- DOM snapshot and storage clone

- Network firewall that turns writes into intents

- Telemetry pipeline (DOM deltas, network, timings)

- Scoring function with goal proximity, risk, and depth penalties

- Branch orchestrator with beam K and lookahead L

- Intent ledger with idempotency keys and leases

- Policy gates (OPA/Rego) and human review path

- Commit executor with verification

- Benchmarks and dashboards for TTFC, success, duplicates

17) Looking ahead: making it smarter

- Learned scoring: Train a ranker on historical branch telemetry labeled by success to improve pruning.

- Pattern memory: Build site-specific playbooks from successful sessions and retrieve them as priors for the proposer and scorer.

- Partial heap capture: Explore JS heap snapshotting (Chrome Heap Profiler) for particular frameworks to improve fidelity.

- Intent CRDTs: For collaborative agents, consider CRDT-like merge semantics for non-destructive writes (e.g., comments), still gated by policy.

- Causal reinforcement: Use uplift modeling to estimate which actions create the largest expected gain in utility.

Conclusion

Speculation gives browser agents the same weapon that made CPUs fast: doing more than one thing at once and discarding the losers quickly. The web’s side effects and variability mean we can’t copy the microarchitecture literally, but with forked tabs, DOM snapshots, causal telemetry, and an intent ledger, we can approximate the spirit safely.

This architecture is simple enough to implement today with Playwright and CDP, but rich enough to grow into a production platform. Start with K=2, L=1, a basic scorer, and a strict ledger; measure, ablate, and iterate. You’ll see meaningful speedups without sacrificing safety—and you’ll build the foundation for agents that learn from their own branches.