Why build a synthetic web farm for browser agents?

Because the open web is a hostile training ground. It is dynamic, personalized, cookie-gated, ad-injected, and rife with anti-automation countermeasures. If you are training agents to operate real UIs, you need environments that are rich and diverse but also deterministic, reproducible, labelable, and safe. A synthetic web farm delivers that: a large set of procedurally generated websites with realistic interaction patterns, embedded ground-truth task graphs, auto-labeled DOM and event traces, randomized skins and layouts, and hermetic snapshots you can ship to any compute cluster. This article outlines a practical, opinionated blueprint for building such a farm end-to-end, from UI generators to RLHF and off-policy evaluation.

References worth knowing for context include MiniWob++ (micro web tasks), WebArena (open-world browsing tasks), Mind2Web (human demonstrations), and recent browser-agent frameworks built on Playwright, Selenium/WebDriver, or Chrome DevTools Protocol (CDP). The approach below borrows ideas from these but leans into hermeticity, task-graph supervision, and procedural diversity aimed at robust, scalable training.

Table of contents

- Design goals and constraints

- Architecture overview

- Procedural UI generation

- Ground-truth task graphs

- Auto-labeling DOM and event traces

- Skin randomization, layout perturbations, and accessibility

- Hermetic snapshots for reproducibility

- Training pipelines: RLHF and OPE

- Evaluation metrics and curricula

- Pitfalls, anti-patterns, and validation

- Roadmap and extensions

Design goals and constraints

Before writing code, set your bar for success:

- Deterministic: Same seed yields the same page tree, styles, and behavior. Episodes are replayable bit-for-bit.

- Realistic: Include forms, carts, tables, modals, search, pagination, drag-and-drop, shadow DOM, virtualized lists, nested scrolling, toasts/snackbars, and async effects.

- Task-grounded: Every environment encodes a set of solvable tasks as an explicit DAG with preconditions and validations.

- Auto-labeled: DOM nodes, events, and network traffic are logged and mapped to task semantics without human annotation.

- Skinnable: Randomize typography, spacing, colors, iconography, language, and LTR/RTL without breaking semantics.

- Hermetic: Zero external dependencies at runtime, frozen time, seeded RNGs, and Content Security Policy to prevent leaks.

- Agent-friendly: Offer structured observation channels (e.g., DOM snapshots with semantic locators) and standardized action schemas (click, type, select, drop, scroll).

- Scalable: Export thousands of sites as static artifacts deployable across clusters, with compact logging and standardized schemas.
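The standardized action schema in the goals above can be pinned down as a discriminated union. This is a minimal sketch. The field names (`locator`, `text`, `value`, `target`, `dy`) are hypothetical and not taken from any fixed standard. A validator like this lets the harness reject malformed agent outputs before they reach the page.

```ts
// Hypothetical action schema for the five action kinds listed above.
type Locator = string; // e.g. a semantic locator such as "sloc-123"

type AgentAction =
  | { kind: 'click'; locator: Locator }
  | { kind: 'type'; locator: Locator; text: string }
  | { kind: 'select'; locator: Locator; value: string }
  | { kind: 'drop'; locator: Locator; target: Locator }
  | { kind: 'scroll'; locator: Locator; dy: number };

// Validate untrusted JSON (e.g. parsed model output) against the schema.
// Returns null for anything malformed instead of throwing.
function parseAction(raw: unknown): AgentAction | null {
  if (typeof raw !== 'object' || raw === null) return null;
  const o = raw as Record<string, unknown>;
  const locator = o.locator;
  if (typeof locator !== 'string' || locator.length === 0) return null;
  switch (o.kind) {
    case 'click':
      return { kind: 'click', locator };
    case 'type': {
      const text = o.text;
      return typeof text === 'string' ? { kind: 'type', locator, text } : null;
    }
    case 'select': {
      const value = o.value;
      return typeof value === 'string' ? { kind: 'select', locator, value } : null;
    }
    case 'drop': {
      const target = o.target;
      return typeof target === 'string' ? { kind: 'drop', locator, target } : null;
    }
    case 'scroll': {
      const dy = o.dy;
      return typeof dy === 'number' && Number.isFinite(dy) ? { kind: 'scroll', locator, dy } : null;
    }
    default:
      return null; // unknown action kind
  }
}
```

Keeping the union closed means any new action kind has to be added deliberately, in both the schema and the replay tooling.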

Opinion: You should treat hermeticity and task graphs as non-negotiable. Without them, evaluating agents or debugging regressions becomes guesswork.

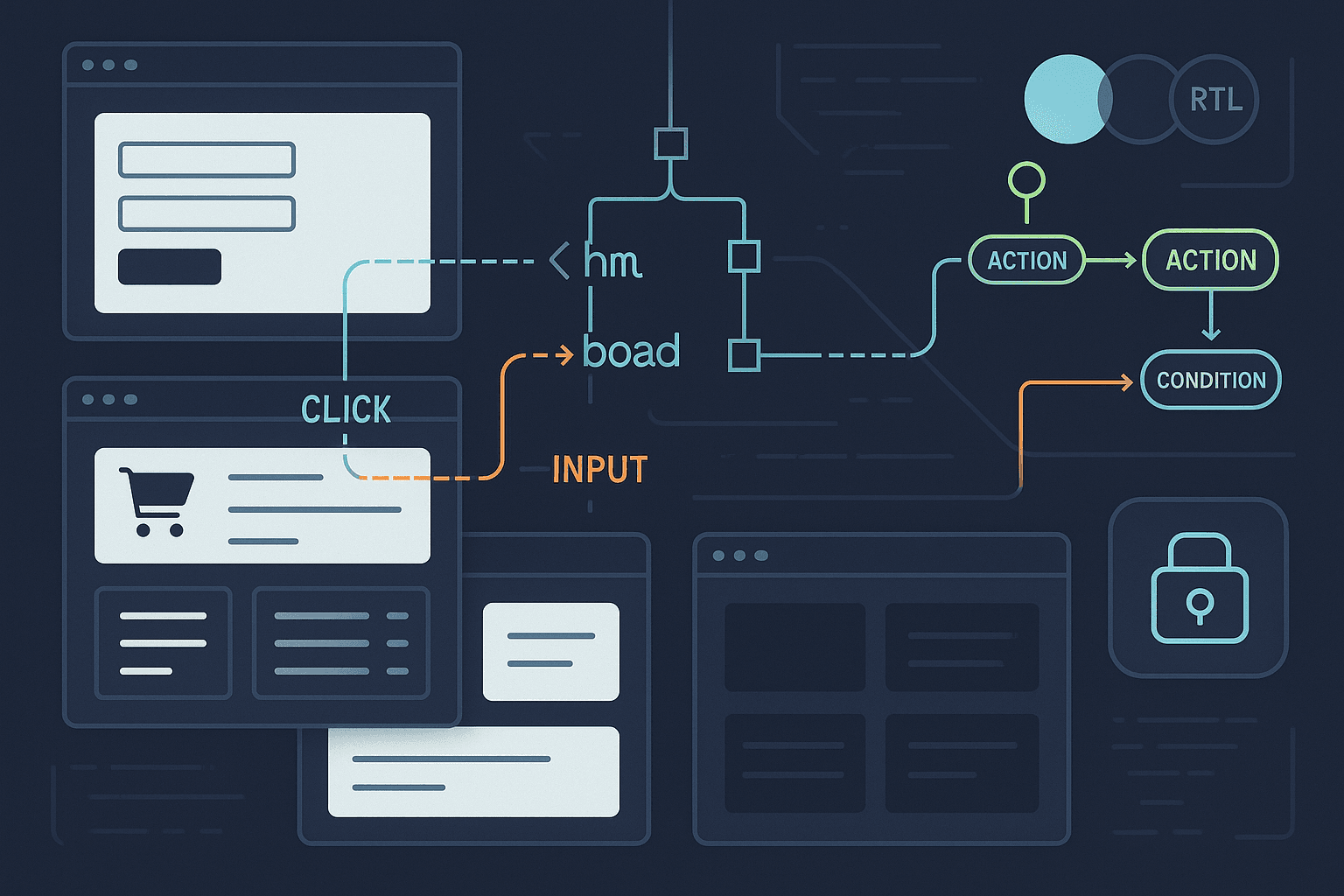

Architecture overview

A minimal synthetic web farm stacks these components:

- Generator: A TypeScript/Node tool that produces a website from a seed, using a component DSL and layout engine.

- Task embedder: Attaches one or more task DAGs into each website and injects a small runtime to expose the ground-truth API.

- Instrumentation layer: Hooks into DOM events, custom elements, and network requests to record semantically labeled traces.

- Skins and localization: A theming system (CSS variables + tokenized design system) and i18n strings to vary surface features.

- Snapshot packager: Builds a hermetic artifact (e.g., a tarball or WebBundle) with an offline service worker, CSP, and hash-locked assets.

- Harness: Playwright/CDP runner to execute episodes, gather rollouts, and run unit/integration tests.

- Training pipeline: RLHF preference data collection and off-policy evaluation using logged episodes with precise propensities and ground-truth rewards.

Procedural UI generation

At the core is a UI generator that composes components into routes with realistic data and behavior. We recommend:

- A component library with parametric factories: Form, Table, ProductCard, Cart, FilterPanel, ModalDialog, Toast, Tabs, TreeView, Calendar, FileUpload, RichText, DataGrid (virtualized), CustomElement (shadow DOM), and EmbeddedFrame.

- A layout engine that places components according to constraints and templates, then injects noise: extra wrapper elements, DOM order that differs from visual order, nested scrolling, sticky bars, and lazy loading.

- A seeded pseudo-random number generator (PRNG) such as seedrandom to guarantee determinism.
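The following dependency-free sketch shows why a seeded PRNG guarantees determinism. It uses mulberry32, a small 32-bit generator swapped in for seedrandom so the example runs on its own. It also includes a seeded Fisher-Yates shuffle of the kind a layout engine might use to make DOM order differ from visual order. The `layoutNoise` helper and its CSS `order` strategy are illustrative assumptions, not part of the generator described here.

```ts
// mulberry32: tiny 32-bit PRNG; the same seed yields the same sequence in every JS engine.
function mulberry32(seed: number): () => number {
  let s = seed >>> 0;
  return () => {
    s = (s + 0x6d2b79f5) >>> 0;
    let t = s;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296; // uniform in [0, 1)
  };
}

// Seeded Fisher-Yates shuffle; returns a new array and leaves the input untouched.
function seededShuffle<T>(items: T[], rng: () => number): T[] {
  const out = items.slice();
  for (let i = out.length - 1; i > 0; i--) {
    const j = Math.floor(rng() * (i + 1));
    const tmp = out[i];
    out[i] = out[j];
    out[j] = tmp;
  }
  return out;
}

// Hypothetical noise step: components are emitted in a shuffled DOM order, while
// CSS `order` values restore the intended visual order, so DOM order != visual order.
function layoutNoise(componentIds: string[], seed: number): { domOrder: string[]; cssOrder: Record<string, number> } {
  const rng = mulberry32(seed);
  const domOrder = seededShuffle(componentIds, rng);
  const cssOrder: Record<string, number> = {};
  componentIds.forEach((id, visualIndex) => {
    cssOrder[id] = visualIndex;
  });
  return { domOrder, cssOrder };
}
```

Because every random draw flows from one seeded generator, regenerating a site with the same seed reproduces the same shuffles. Mixing in a single unseeded `Math.random()` call silently breaks that guarantee.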

Example: Component factory API in TypeScript

```ts
// ui/factories.ts
import seedrandom from 'seedrandom';

export type RNG = () => number;

export function makeRng(seed: string): RNG {
  const r = seedrandom(seed);
  return () => r.quick();
}

export interface FormFieldSpec {
  kind: 'text' | 'email' | 'password' | 'number' | 'date' | 'select' | 'checkbox' | 'radio';
  name: string;
  label: string;
  required?: boolean;
  options?: string[]; // for select/radio
  validators?: Array<{ rule: 'min' | 'max' | 'regex'; arg: number | string }>;
}

export function FormComponent(fields: FormFieldSpec[], rng: RNG) {
  const id = Math.floor(rng() * 1e6);
  const html = `<form data-gt-id='form-${id}' novalidate>
  ${fields.map((f, idx) => renderField(f, idx)).join('')}
  <button type='submit' data-gt-id='submit-${id}'>Submit</button>
</form>`;
  const script = `
(function(){
  const form = document.querySelector("[data-gt-id='form-${id}']");
  form.addEventListener('submit', (e) => {
    e.preventDefault();
    // synthetic validation; emits on the ground-truth event channel
    const payload = {};
    for (const el of form.elements) {
      if (!el.name) continue;
      if (el.type === 'checkbox') payload[el.name] = el.checked;
      else if (el.type === 'radio') { if (el.checked) payload[el.name] = el.value; } // only the selected radio
      else payload[el.name] = el.value;
    }
    window.__gt && window.__gt.emit('form_submit', { id: 'form-${id}', payload });
  });
})();`;
  return { html, script };
}

function renderField(f: FormFieldSpec, idx: number): string {
  const id = `${f.name}-${idx}`;
  const label = `<label for='${id}' data-gt-id='label-${id}'>${f.label}</label>`;
  switch (f.kind) {
    case 'text': case 'email': case 'password': case 'number': case 'date':
      // for these kinds, f.kind doubles as the input type
      return `<div class='field'>${label}<input type='${f.kind}' name='${f.name}' id='${id}' data-gt-id='input-${id}' /></div>`;
    case 'select':
      return `<div class='field'>${label}<select name='${f.name}' id='${id}' data-gt-id='select-${id}'>${(f.options || []).map(o => `<option value='${o}'>${o}</option>`).join('')}</select></div>`;
    case 'checkbox':
      return `<div class='field'><input type='checkbox' name='${f.name}' id='${id}' data-gt-id='check-${id}' />${label}</div>`;
    case 'radio':
      return `<fieldset data-gt-id='radio-${id}'><legend>${f.label}</legend>${(f.options || []).map((o, i) => `<label><input type='radio' name='${f.name}' value='${o}' data-gt-id='radio-${id}-${i}' />${o}</label>`).join('')}</fieldset>`;
  }
}
```

Shadow DOM and custom elements

Agents often break when content is inside shadow DOM. Include custom elements to force shadow-root traversal and slotting logic.

```ts
// ui/custom-elements.ts
// Shipped as a string and injected into the page, so the class body must be plain JS
// (no TypeScript syntax such as non-null assertions).
export const ShadowCounterElement = `
class XCounter extends HTMLElement {
  constructor() {
    super();
    this.attachShadow({ mode: 'open' });
  }
  connectedCallback() {
    const root = this.shadowRoot;
    if (root.childNodes.length) return; // connectedCallback fires again if the element is moved
    const btn = document.createElement('button');
    btn.textContent = 'Increment';
    btn.setAttribute('data-gt-id', 'xcounter-btn');
    const span = document.createElement('span');
    span.textContent = '0';
    span.setAttribute('data-gt-id', 'xcounter-value');
    btn.addEventListener('click', () => {
      span.textContent = String(parseInt(span.textContent || '0', 10) + 1);
      window.__gt && window.__gt.emit('xcounter_inc', { value: span.textContent });
    });
    const style = document.createElement('style');
    style.textContent = ':host{display:inline-flex;gap:8px}';
    root.append(style, btn, span);
  }
}
customElements.define('x-counter', XCounter);
`;
```
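An agent's locator resolver has to descend into open shadow roots, because `document.querySelector` does not cross shadow boundaries. The sketch below shows that traversal over a simplified, hypothetical node model (`VNode` with an optional `shadowRoot`) so it runs outside a browser. In a real page, the same recursion would walk `element.shadowRoot` and `element.children`.

```ts
// Simplified stand-in for DOM elements; `shadowRoot` mirrors an open shadow root's children.
interface VNode {
  tag: string;
  attrs: Record<string, string>;
  children: VNode[];
  shadowRoot?: VNode[];
}

// Iterative depth-first search that also enters shadow roots and returns the first
// node whose data-gt-id matches. Shadow content is visited before light children
// (an arbitrary but fixed order, so results are deterministic).
function deepFindByGtId(roots: VNode[], gtId: string): VNode | null {
  const stack: VNode[] = roots.slice().reverse();
  while (stack.length > 0) {
    const node = stack.pop()!;
    if (node.attrs['data-gt-id'] === gtId) return node;
    const next = (node.shadowRoot ?? []).concat(node.children);
    // push in reverse so the first child is popped first
    for (let i = next.length - 1; i >= 0; i--) stack.push(next[i]);
  }
  return null;
}
```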

Embedded frames

Use sandboxed, same-origin iframes to simulate cross-app flows. Keeping them same-origin preserves hermeticity while still modeling cross-boundary interactions and focus traps.

```html
<!-- ui/embedded-frame.html -->
<iframe src='frame-content.html' sandbox='allow-scripts allow-same-origin' data-gt-id='embedded-frame'></iframe>
```

Ground-truth task graphs

Every generated site should come with a structured task set. Represent tasks as a DAG of nodes with:

- Preconditions: state checks or DOM predicates that must hold.

- Actions: canonical operations (click element X, type text Y in input Z, select option, drag A to B, scroll until visible, etc.).

- Effects: state transitions in a key-value store or page-level signals.

- Assertions: success conditions (DOM contains receipt number, cart total equals $N, etc.).

- Hints: optional semantic breadcrumbs (alt text, ARIA roles, labels) to gauge agent use of accessibility features.

A concise YAML schema works well and avoids brittle string parsing.

```yaml
# tasks/checkout.yaml
version: 1
seed: 12345
meta:
  difficulty: 3
  categories: [commerce, forms, cart]
  time_limit_ms: 90000
nodes:
  - id: start
    kind: precondition
    check: dom.exists('[data-gt-id="nav-cart"]')
  - id: open_cart
    kind: action
    op: click
    args:
      locator: css('[data-gt-id="nav-cart"]')
  - id: verify_cart
    kind: assertion
    assert: dom.textMatches('[data-gt-id="cart-title"]', /Your Cart/i)
  - id: proceed_checkout
    kind: action
    op: click
    args:
      locator: text('Proceed to checkout')
  - id: fill_form
    kind: action
    op: fill_form
    args:
      mapping:
        # @faker-js/faker v8+ API names
        name: faker.person.fullName()
        email: faker.internet.email()
        address: faker.location.streetAddress()
  - id: submit
    kind: action
    op: click
    args:
      # prefix match; CSS has no '*' wildcard inside attribute values
      locator: css('[data-gt-id^="submit-"]')
  - id: success
    kind: assertion
    assert: dom.exists('[data-gt-id="order-confirmation"]')
edges:
  - [start, open_cart]
  - [open_cart, verify_cart]
  - [verify_cart, proceed_checkout]
  - [proceed_checkout, fill_form]
  - [fill_form, submit]
  - [submit, success]
```
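Before shipping a task file, check that the graph really is a DAG and derive the optimal step count used for normalized path length. The sketch below uses Kahn's algorithm and assumes the YAML has already been parsed into the `TaskGraph` shape shown (the parsing step is omitted). It also assumes conjunctive semantics, where every action node must run once, so counting action nodes gives the optimal step count. Graphs with alternative branches would need a shortest-path computation instead.

```ts
interface TaskNode { id: string; kind: 'precondition' | 'action' | 'assertion' }
interface TaskGraph { nodes: TaskNode[]; edges: Array<[string, string]> }

// Kahn's algorithm: returns a topological order, or throws on duplicate ids,
// edges to unknown nodes, or cycles.
function topoOrder(g: TaskGraph): string[] {
  const indeg = new Map<string, number>();
  const succ = new Map<string, string[]>();
  for (const n of g.nodes) {
    if (indeg.has(n.id)) throw new Error(`duplicate node id: ${n.id}`);
    indeg.set(n.id, 0);
    succ.set(n.id, []);
  }
  for (const [from, to] of g.edges) {
    if (!indeg.has(from) || !indeg.has(to)) throw new Error(`edge references unknown node: ${from} -> ${to}`);
    succ.get(from)!.push(to);
    indeg.set(to, indeg.get(to)! + 1);
  }
  const queue = g.nodes.filter(n => indeg.get(n.id) === 0).map(n => n.id);
  const order: string[] = [];
  while (queue.length > 0) {
    const id = queue.shift()!;
    order.push(id);
    for (const nxt of succ.get(id)!) {
      const d = indeg.get(nxt)! - 1;
      indeg.set(nxt, d);
      if (d === 0) queue.push(nxt);
    }
  }
  // Nodes left with nonzero in-degree sit on a cycle.
  if (order.length !== g.nodes.length) throw new Error('task graph contains a cycle');
  return order;
}

// Under the conjunctive assumption above, this is the optimal step count.
function actionNodeCount(g: TaskGraph): number {
  return g.nodes.filter(n => n.kind === 'action').length;
}
```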

Embedding task graphs into the page

At build time, bundle the YAML (or JSON) with the site and expose a minimal runtime API under window.__gt. That API should:

- Emit task and event signals.

- Offer a canonical list of tasks and nodes with IDs.

- Validate assertions on-demand (for online reward) and in batch (offline replays).

```ts
// runtime/gt.ts
type Listener = (payload: unknown) => void;

export function installGroundTruth(tasks: unknown) {
  const listeners: Record<string, Listener[]> = {};
  const api = {
    tasks,
    emit: (type: string, payload: unknown) => {
      (listeners[type] || []).forEach(fn => fn(payload));
      // mirror on a same-origin message channel so the harness can observe it
      window.postMessage({ __gt: true, type, payload }, window.location.origin);
    },
    on: (type: string, fn: Listener) => {
      (listeners[type] ||= []).push(fn);
    },
    assert: (id: string): { ok: boolean; reason: string } => {
      // Evaluate assertion node `id` against the DOM; returns a verdict plus diagnostics.
      // Implementation elided for brevity; fail closed so a stub never reports success.
      return { ok: false, reason: `assertion ${id}: not implemented` };
    },
  };
  (window as any).__gt = api;
}
```

Auto-labeling DOM and event traces

To train or evaluate without brittle heuristics, label everything that matters.

Stable semantic locators

- Assign every actionable element a data-gt-id and data-gt-role (e.g., button, input, link, table-row) from the generator.

- Compute a semantic locator hash from the accessible name (ARIA), role, and ancestry to survive skin changes.

- Expose a getSemanticLocator(el) function in the runtime that agents and loggers can call.

```ts
// runtime/semantic.ts
export function semanticLocator(el: Element): string {
  const role = el.getAttribute('role') || el.tagName.toLowerCase();
  // Prefer the ARIA label, then textContent. innerText depends on CSS (e.g. text-transform,
  // display:none), which would make the locator change when the skin changes.
  const name = (el.getAttribute('aria-label') || el.textContent || '').replace(/\s+/g, ' ').trim();
  const path: string[] = [];
  let cur: Element | null = el;
  while (cur && path.length < 5) {
    path.push(cur.tagName.toLowerCase());
    cur = cur.parentElement;
  }
  const raw = [role, name.slice(0, 64), path.join('>')].join('|');
  // simple 32-bit string hash (not collision-resistant)
  let h = 0;
  for (let i = 0; i < raw.length; i++) {
    h = ((h << 5) - h) + raw.charCodeAt(i);
    h |= 0;
  }
  return `sloc-${Math.abs(h)}`;
}
```

Event capture

Instrument both from inside the page and externally via CDP/Playwright for redundancy. In-page capture gives semantic richness; CDP gives authoritative input and network timing.

- In-page: wrap addEventListener for click, input, change, keydown, submit; publish structured events to window.__gt.emit.

- CDP: use Page.addScriptToEvaluateOnNewDocument to ensure instrumentation executes before app code; subscribe to Network, Runtime, and DOM events, and drive agent input through the Input domain.

- Service Worker: log fetch events to associate resource loads with UI interactions.

Event log schema (pseudo-JSON)

```json
{
  "episode_id": "ep-000123",
  "seed": "12345",
  "events": [
    {"t": 10.2, "src": "agent", "type": "click", "locator": "data-gt-id=nav-cart", "success": true},
    {"t": 10.3, "src": "dom", "type": "navigation", "url": "/cart"},
    {"t": 12.0, "src": "agent", "type": "fill", "locator": "data-gt-id=input-email", "value": "a@b.com"},
    {"t": 15.1, "src": "dom", "type": "__gt", "name": "form_submit", "payload": {"id": "form-88"}},
    {"t": 15.3, "src": "net", "type": "fetch", "req": "/api/checkout", "status": 200}
  ]
}
```

Note: Keep timestamps monotonic and origin-stamped (agent/dom/net) to enable precise counterfactual evaluation.
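The monotonic, origin-stamped requirement is easy to enforce at ingestion time. This is a minimal sketch that assumes the event shape of the schema above (`t` is a number, `src` is one of `agent`/`dom`/`net`). Rejecting bad logs early keeps corrupted episodes out of OPE datasets.

```ts
type EventOrigin = 'agent' | 'dom' | 'net';
interface LoggedEvent { t: number; src: EventOrigin; type: string; [extra: string]: unknown }
interface EpisodeLog { episode_id: string; seed: string; events: LoggedEvent[] }

const ORIGINS: ReadonlySet<string> = new Set(['agent', 'dom', 'net']);

// Returns a list of problems; an empty list means the log passes.
// Equal timestamps are allowed (non-decreasing order).
function checkEpisodeLog(log: EpisodeLog): string[] {
  const problems: string[] = [];
  let prev = -Infinity;
  log.events.forEach((ev, i) => {
    if (!ORIGINS.has(ev.src)) problems.push(`event ${i}: unknown origin ${String(ev.src)}`);
    if (!Number.isFinite(ev.t)) problems.push(`event ${i}: non-numeric timestamp`);
    else if (ev.t < prev) problems.push(`event ${i}: timestamp ${ev.t} < previous ${prev}`);
    else prev = ev.t;
  });
  return problems;
}
```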

Skin randomization, layout perturbations, and accessibility

Domain randomization is essential for robustness. Vary presentation without changing semantics.

- CSS variables: Tokenize colors, spacing, elevation, radii, typography, and transitions. Provide multiple themes (light, dark, high-contrast, corporate, playful).

- Layout seeds: Randomize grid vs flex layouts, sidebars left/right, modal vs inline forms, card vs list views.

- Responsive shifts: Test mobile breakpoints, density modes (compact/comfortable), and nested scrolling containers with sticky headers.

- Localization: Provide en, es, de, fr, ar (RTL) with realistic plural rules; ensure accessible names persist.

- Adversarial tweaks: Visual order ≠ DOM order; off-screen render with CSS transforms; long labels; duplicate link texts with different targets.

Example: theme tokens and alternate skins

```css
/* tokens.css */
:root {
  --bg: #101418;
  --fg: #e6edf3;
  --muted: #9fb1c1;
  --accent: #6cf;
  --accent-2: #a6f;
  --danger: #f66;
  --radius: 8px;
  --space-1: 4px;
  --space-2: 8px;
  --space-3: 12px;
  --font: Inter, system-ui, sans-serif;
}
[data-skin='corp'] { --accent: #0a84ff; --radius: 4px; }
[data-skin='hc'] { --bg: #000; --fg: #fff; --accent: #ff0; filter: contrast(1.2); }
[dir='rtl'] { direction: rtl; }
```
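Skins and locales are applied as attributes on the root element, so a seeded picker only needs to choose values for `data-skin`, `lang`, and `dir`. This sketch assumes the skin and locale lists match the tokens and locales above. Mapping a hash to a list index with FNV-1a is an illustrative choice.

```ts
const SKINS = ['default', 'corp', 'hc'] as const;
const LOCALES = ['en', 'es', 'de', 'fr', 'ar'] as const;
const RTL_LOCALES = new Set<string>(['ar']);

// FNV-1a 32-bit hash: a stable, dependency-free way to turn a string into an integer.
function fnv1a(s: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h >>> 0;
}

interface SkinAttrs { 'data-skin': string; lang: string; dir: 'ltr' | 'rtl' }

// Deterministic per (siteSeed, variant): a CI "skin flip" is just a different variant index.
function pickSkin(siteSeed: string, variant: number): SkinAttrs {
  const skin = SKINS[fnv1a(`${siteSeed}:skin:${variant}`) % SKINS.length];
  const locale = LOCALES[fnv1a(`${siteSeed}:locale:${variant}`) % LOCALES.length];
  return { 'data-skin': skin, lang: locale, dir: RTL_LOCALES.has(locale) ? 'rtl' : 'ltr' };
}
```

In the page, the bootstrap script would copy these attributes onto `document.documentElement` before first paint. The skin and the locale use separate salts, so they vary independently across variants.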

Hermetic snapshots for reproducibility

A hermetic snapshot is a sealed, self-contained package of a site, its assets, and a runtime enforcing determinism and offline behavior. The goal is bit-wise reproducibility and environment portability.

What to freeze

- Assets: HTML, JS, CSS, fonts, images, wasm. Store with content hashes.

- Data: Initial app state (localStorage/IndexedDB seeds), mock server responses, fixture catalogs (products, users, etc.).

- Time: Replace Date.now and performance.now with a seeded, controllable clock.

- Randomness: Override Math.random and crypto.getRandomValues with a seeded PRNG.

- Network: Service Worker intercepts all fetch/XHR and serves from local fixtures.

- Security: Strong CSP to disallow external connections; Subresource Integrity (SRI) for all scripts/styles.

Snapshot structure

- site-000123/
  - index.html
  - assets/
    - app.hash.js
    - vendor.hash.js
    - styles.hash.css
    - fonts/
  - data/
    - fixtures.json
    - tasks.yaml
  - runtime/
    - sw.js
    - gt.js
    - semantic.js
  - manifest.json

manifest.json example (pseudo-JSON)

```json
{
  "id": "site-000123",
  "seed": "12345",
  "version": 1,
  "entry": "/index.html",
  "hashes": {"/assets/app.hash.js": "sha256-..."},
  "csp": "default-src 'self'; script-src 'self'; style-src 'self' 'unsafe-inline'",
  "skins": ["default", "corp", "hc"],
  "locales": ["en", "es", "fr", "de", "ar"],
  "gt_tasks": "/data/tasks.yaml"
}
```
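A packager can refuse to emit a snapshot whose manifest would break hermeticity. This is a minimal sketch of such a lint. It assumes the manifest fields shown above and a list of file paths produced by the build. It only inspects the CSP string and hash coverage and does not compute or verify SHA digests.

```ts
interface SnapshotManifest {
  id: string; seed: string; version: number; entry: string;
  hashes: Record<string, string>; csp: string;
  skins: string[]; locales: string[]; gt_tasks: string;
}

// Hypothetical lint: every shipped script/style needs an SRI-style hash entry, and no
// CSP directive may allow a source other than quoted keywords such as 'self'.
function lintManifest(m: SnapshotManifest, snapshotFiles: string[]): string[] {
  const errors: string[] = [];
  const directives = m.csp.split(';').map(d => d.trim()).filter(d => d.length > 0);
  if (directives.indexOf("default-src 'self'") < 0) errors.push("CSP must include default-src 'self'");
  for (const d of directives) {
    for (const src of d.split(/\s+/).slice(1)) {
      // Anything that is not a quoted keyword is a host or scheme source (a potential leak).
      if (!/^'.*'$/.test(src)) errors.push(`CSP directive allows external source: ${src}`);
    }
  }
  for (const f of snapshotFiles) {
    if (/\.(js|css)$/.test(f) && !/^sha(256|384|512)-/.test(m.hashes[f] ?? '')) {
      errors.push(`missing SRI hash for ${f}`);
    }
  }
  if (snapshotFiles.indexOf(m.entry) < 0) errors.push(`entry ${m.entry} not in snapshot`);
  if (snapshotFiles.indexOf(m.gt_tasks) < 0) errors.push(`task file ${m.gt_tasks} not in snapshot`);
  return errors;
}
```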

Deterministic clocks and RNGs

Install a bootstrap script that runs before the app, seeding Math.random and overriding time APIs.

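```ts
// runtime/determinism.ts
import seedrandom from 'seedrandom';

export function installDeterminism(seed: string) {
  const rng = seedrandom(seed);
  const t0 = Date.UTC(2023, 0, 1); // fixed virtual epoch
  let dt = 0; // virtual milliseconds elapsed since t0

  // Seeded randomness for Math.random and crypto.getRandomValues
  // (crypto.randomUUID is not covered by this override).
  (Math as any).random = () => rng.quick();
  (crypto as any).getRandomValues = <T extends ArrayBufferView | null>(arr: T): T => {
    if (arr) {
      const bytes = new Uint8Array(arr.buffer, arr.byteOffset, arr.byteLength);
      for (let i = 0; i < bytes.length; i++) bytes[i] = Math.floor(rng.quick() * 256);
    }
    return arr;
  };

  // Virtual clock; advances only via the animation-frame hook below.
  // Note: new Date() without arguments still reads the real clock.
  Date.now = () => t0 + dt;
  (performance as any).now = () => dt;

  // Advance 16 ms once per frame and hand callbacks the virtual timestamp.
  const raf = window.requestAnimationFrame.bind(window);
  let lastFrame = -1;
  window.requestAnimationFrame = (cb: FrameRequestCallback) =>
    raf((realTs) => {
      // all callbacks in one real frame share a timestamp, so advance only once per frame
      if (realTs !== lastFrame) { lastFrame = realTs; dt += 16; }
      cb(dt);
    });
}
```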

Offline service worker

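The service worker should serve assets from its cache without cache-busting, intercept every fetch, and map dynamic endpoints to fixtures. Register it from the site root (or send a Service-Worker-Allowed header). A worker's default scope is the directory it is served from, so a script at /runtime/sw.js could not control /index.html or intercept its requests.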

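```js
// runtime/sw.js
const CACHE = 'fixtures';
// Extend with the hashed assets listed in manifest.json.
const PRECACHE = ['/data/fixtures.json'];

self.addEventListener('install', (e) => {
  // Populate the cache up front; otherwise the fixture lookup below finds nothing.
  e.waitUntil(caches.open(CACHE).then((c) => c.addAll(PRECACHE)).then(() => self.skipWaiting()));
});

self.addEventListener('activate', (e) => {
  e.waitUntil(self.clients.claim());
});

self.addEventListener('fetch', (e) => {
  const url = new URL(e.request.url);
  if (url.origin !== location.origin) {
    e.respondWith(new Response('blocked', { status: 451 }));
    return;
  }
  // Map API calls to fixtures, e.g. /api/checkout -> key "_api_checkout"
  if (url.pathname.startsWith('/api/')) {
    e.respondWith((async () => {
      const res = await caches.match('/data/fixtures.json');
      const json = res ? await res.json() : {};
      const key = url.pathname.replaceAll('/', '_');
      const body = JSON.stringify(json[key] || { ok: true });
      return new Response(body, { headers: { 'Content-Type': 'application/json' } });
    })());
    return;
  }
  // Static assets: cache first, then the local static server
  e.respondWith(caches.match(e.request).then((hit) => hit || fetch(e.request)));
});
```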

Packaging format

- Tarball or zip: simplest to distribute; mount with a tiny static server.

- WebBundle (.wbn): single-file package of a web app; Chromium supports it behind flags; useful for sealed delivery.

- Container image: for end-to-end hermeticity including the server; heavier but simple to orchestrate.

Opinion: A tarball plus a 50-line Node static server is the right default. Add a Service Worker and tight CSP to prevent leaks. Avoid depending on browser-specific bundle formats until they are stable across major engines.

Harness: running snapshots via Playwright

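```ts
// harness/run.ts
import { chromium } from 'playwright';
import type { Page } from 'playwright';
import http from 'http';
import type { AddressInfo } from 'net';
import serveStatic from 'serve-static';
import finalhandler from 'finalhandler';

export async function runEpisode(dir: string, policy: (page: Page) => Promise<void>) {
  // Start an ephemeral static server on a free port
  const serve = serveStatic(dir, { fallthrough: false, etag: false, dotfiles: 'allow' });
  const server = http.createServer((req, res) => serve(req, res, finalhandler(req, res)));
  await new Promise<void>((r) => server.listen(0, '127.0.0.1', r));
  const port = (server.address() as AddressInfo).port;
  const url = `http://127.0.0.1:${port}/index.html`;

  const browser = await chromium.launch({ headless: true });
  const events: Array<{ t: number; src: 'dom'; type: string; payload: unknown }> = [];
  try {
    const ctx = await browser.newContext({ locale: 'en-US' });
    const page = await ctx.newPage();
    // window.__gt.emit mirrors events via postMessage (not console), so forward them to Node.
    await page.exposeFunction('__gtRecord', (type: string, payload: unknown) => {
      events.push({ t: Date.now(), src: 'dom', type, payload });
    });
    await page.addInitScript(() => {
      window.addEventListener('message', (ev) => {
        if (ev.data && ev.data.__gt) (window as any).__gtRecord(ev.data.type, ev.data.payload);
      });
    });
    await page.goto(url);
    await policy(page); // your agent logic here
  } finally {
    await browser.close();
    await new Promise<void>((r) => server.close(() => r()));
  }
  return { events };
}
```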

Training pipelines: RLHF and OPE

Your synthetic farm should serve two key training and evaluation modes.

RLHF (Reinforcement Learning from Human Feedback)

- Collect trajectories: Run a baseline policy (scripted, imitation, or early agent) across a task distribution. Record action sequences and outcomes.

- Preference labeling: Sample pairs of trajectories for the same task seed and ask human annotators to choose the better one (shorter, fewer errors, compliant with instructions). Synthetic environments constrain the UI vocabulary, which helps labelers work more consistently and quickly.

- Reward model: Train a reward model on trajectory features (action types, DOM context, error counts, final assertion success) and preference labels.

- Policy optimization: Optimize the agent against the learned reward, optionally mixing in ground-truth rewards available from task assertions for regularization.
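The reward-model step is usually framed as a Bradley-Terry model: the probability that trajectory A is preferred over B is σ(r(A) − r(B)). The sketch below uses a linear reward over hand-picked trajectory features and trains it by plain gradient ascent on the log-likelihood of the labels. The feature choice, learning rate, and epoch count are illustrative assumptions. Production reward models are typically neural networks over much richer inputs.

```ts
// Feature vector per trajectory, e.g. [success, steps / 10, errorCount] (illustrative).
type Features = number[];
interface Preference { preferred: Features; rejected: Features }

const sigmoid = (z: number): number => 1 / (1 + Math.exp(-z));
const dot = (w: number[], x: number[]): number => w.reduce((acc, wi, i) => acc + wi * x[i], 0);

// Bradley-Terry with a linear reward r(x) = w . x:
// P(preferred beats rejected) = sigmoid(r(preferred) - r(rejected)).
function trainRewardModel(prefs: Preference[], dim: number, lr = 0.1, epochs = 200): number[] {
  const w = new Array<number>(dim).fill(0);
  for (let epoch = 0; epoch < epochs; epoch++) {
    for (const { preferred, rejected } of prefs) {
      const margin = dot(w, preferred) - dot(w, rejected);
      // d/dw log sigmoid(margin) = (1 - sigmoid(margin)) * (preferred - rejected)
      const g = 1 - sigmoid(margin);
      for (let i = 0; i < dim; i++) w[i] += lr * g * (preferred[i] - rejected[i]);
    }
  }
  return w;
}

function rewardOf(w: number[], x: Features): number {
  return dot(w, x);
}
```

The learned `rewardOf` then scores rollouts during policy optimization, and ground-truth assertion rewards can be mixed in as described above.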

Tips

- Use trajectory sketches: Summaries containing key states, DOM screenshots, and action diffs to speed up human labeling.

- Debias with skins: Vary themes and locales across preference pairs, keeping both trajectories within a pair on the same skin, so the reward model cannot latch onto a specific skin.

- Safety: Filter trajectories that exploit UI bugs (e.g., hidden dev backdoors) discovered in synthetic environments.

Off-Policy Evaluation (OPE)

OPE estimates the performance of a new policy from data logged under a different policy, avoiding expensive fresh rollouts. In web environments, importance sampling can have high variance. Synthetic farms let you log exact action propensities and rich state descriptors, which makes stronger estimators practical.

- Logging policy: For each state s and action a, log π_b(a|s) (behavior policy probability) and the state descriptor (DOM hash, semantic locator set, and critical flags like focus/viewport).

- Reward: Compute per-step or per-episode rewards using ground-truth assertions and signals, not heuristics.

- Estimators: Implement weighted importance sampling (WIS), doubly robust (DR), and marginalized IS. If the action space factors (e.g., locator selection × action type × argument), log per-component propensities so their product gives π_b(a|s) exactly.
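Of the estimators listed, weighted importance sampling is the simplest to get right. Each episode's return is weighted by the product of per-step probability ratios, and the weights are then self-normalized. This sketch assumes each logged step carries the behavior probability `pB` and that the target policy exposes a `prob(action, state)` method (a hypothetical interface). Self-normalization trades a small bias for much lower variance than ordinary IS.

```ts
interface LoggedStep { state: string; action: string; reward: number; pB: number }
interface TargetPolicy { prob(action: string, state: string): number }

// Trajectory-wise WIS estimate of the target policy's expected (discounted) return.
function wisEstimate(episodes: LoggedStep[][], pi: TargetPolicy, gamma = 1.0): number {
  let weightedSum = 0;
  let weightTotal = 0;
  for (const steps of episodes) {
    let w = 1; // product of pi(a|s) / pi_b(a|s) over the episode
    let ret = 0;
    steps.forEach((s, t) => {
      w *= pi.prob(s.action, s.state) / Math.max(s.pB, 1e-8);
      ret += Math.pow(gamma, t) * s.reward;
    });
    weightedSum += w * ret;
    weightTotal += w;
  }
  // If no logged episode is possible under pi, there is no evidence; return 0 by convention.
  return weightTotal > 0 ? weightedSum / weightTotal : 0;
}
```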

Python sketch: doubly robust estimator over episodes

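```python
from typing import Callable, Dict, List


def dr_return(episodes: List[Dict], pi_new, q_hat: Callable, v_hat: Callable, gamma: float = 1.0) -> float:
    """Per-decision doubly robust (DR) estimate of pi_new's expected return.

    Each step logs state, action, reward, and p_b = pi_b(a|s).
    q_hat(s, a) and v_hat(s) come from a critic trained on the logs, with
    v_hat(s) approximating E_{a ~ pi_new}[q_hat(s, a)].
    """
    total = 0.0
    for ep in episodes:
        w_prev = 1.0  # cumulative importance weight through step t-1
        dr = 0.0
        for t, step in enumerate(ep['steps']):
            s, a, r, p_b = step['state'], step['action'], step['reward'], step['p_b']
            w = w_prev * pi_new.prob(a, s) / max(p_b, 1e-8)
            # Importance-weighted reward with the critic as a control variate:
            # subtract w * Q_hat(s, a) and add back w_prev * V_hat(s).
            dr += gamma ** t * (w * (r - q_hat(s, a)) + w_prev * v_hat(s))
            w_prev = w
        total += dr
    return total / max(len(episodes), 1)
```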

Crucially, your synthetic environment provides:

- Exact rewards with no delayed human review.

- Reliable propensities for behavior policies you control.

- Structured state descriptors (semantic locators, DOM hashes) to train critics.

Evaluation metrics and curricula

Metrics

- Task success rate: Fraction of episodes that satisfy the terminal assertion within budget.

- Normalized path length: Agent steps ÷ optimal steps (from the task graph).

- Action accuracy: Ratio of semantically correct actions among all actions.

- Recovery rate: Probability of success after a mistake.

- Robustness: Success under skin flips, locale changes, and layout perturbations unseen during training.

- Accessibility usage: Fraction of locator resolutions that use ARIA roles/names vs brittle CSS/XPath.

- Latency: Time to first correct action, time to completion.
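Most of these metrics fall out of per-episode summaries. This sketch assumes a hypothetical `EpisodeSummary` record (success flag, step counts, and a count of semantically incorrect actions) produced by replaying logs against the task graph. Here, recovery rate is read as the success rate among episodes that contained at least one incorrect action.

```ts
interface EpisodeSummary {
  success: boolean;      // terminal assertion satisfied within budget
  steps: number;         // agent actions taken
  optimalSteps: number;  // shortest solution according to the task graph
  wrongActions: number;  // actions judged semantically incorrect
}

interface FarmMetrics { successRate: number; normPathLength: number; actionAccuracy: number; recoveryRate: number }

function computeMetrics(eps: EpisodeSummary[]): FarmMetrics {
  const mean = (xs: number[]) => (xs.length ? xs.reduce((a, b) => a + b, 0) / xs.length : NaN);
  const succeeded = eps.filter(e => e.success);
  const withMistake = eps.filter(e => e.wrongActions > 0);
  const totalSteps = eps.reduce((a, e) => a + e.steps, 0);
  const totalWrong = eps.reduce((a, e) => a + e.wrongActions, 0);
  return {
    successRate: mean(eps.map(e => (e.success ? 1 : 0))),
    // Path length is only meaningful for episodes that actually finished.
    normPathLength: mean(succeeded.map(e => e.steps / e.optimalSteps)),
    actionAccuracy: totalSteps ? (totalSteps - totalWrong) / totalSteps : NaN,
    recoveryRate: mean(withMistake.map(e => (e.success ? 1 : 0))),
  };
}
```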

Curricula

- Level 0: Single-step tasks (click a button with a unique label).

- Level 1: Short forms (2–3 fields, straightforward validation).

- Level 2: Multi-step flows (cart → checkout → confirm) with toasts and async delays.

- Level 3: Shadow DOM components, nested iframes, virtualized tables.

- Level 4: Adversarial skins, ambiguous labels, duplicate affordances, partial observability (scroll-to-reveal).

Construct curricula automatically from the generator seed space. Maintain disjoint seed splits for train/val/test to avoid leakage.
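One way to keep seed splits disjoint without maintaining lookup tables is to assign each seed to a split by hashing it. The assignment then stays stable as the seed space grows, and a seed can never land in two splits. This is a sketch. The 80/10/10 proportions and the FNV-1a hash are assumptions.

```ts
type Split = 'train' | 'val' | 'test';

// FNV-1a 32-bit hash of a string.
function hashSeed(seed: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < seed.length; i++) {
    h ^= seed.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h;
}

// Stable assignment: roughly 80% train, 10% val, 10% test (illustrative proportions).
// The "split:" salt keeps this hash independent of other seed-derived choices.
function splitFor(seed: string): Split {
  const bucket = hashSeed(`split:${seed}`) % 100;
  return bucket < 80 ? 'train' : bucket < 90 ? 'val' : 'test';
}
```

Because the split is a pure function of the seed, a generator worker can decide locally whether a seed is eligible for training without consulting a central registry.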

Pitfalls, anti-patterns, and validation

Common mistakes

- Leaky determinism: Forgetting to override crypto.getRandomValues or Date.now → non-reproducible episodes.

- Non-hermetic assets: External fonts/icons/CDNs break offline runs; lock everything under 'self'.

- Overfitting to data-gt-id: If agents learn to search for explicit ground-truth attributes, they will fail in the wild. Expose them only in logs or behind a training-only channel.

- Misleading success: Checking for string presence ('Success!') rather than a structural assertion (receipt number exists with valid format) invites spurious completions.

- Brittle locators: Tests that depend on exact CSS paths will shatter under skin flips; enforce semantic locators in your harness.

Validation strategies

- Snapshot diffing: Hash DOM subtrees (outerHTML normalized) after each action to detect nondeterminism across runs.

- Assertion fuzzing: Negate or perturb success predicates to ensure they are not vacuous.

- Skin flips in CI: Run the same episode under 3 skins and 2 locales; require consistent success.

- Cross-engine runs: Headless Chromium + Firefox (if possible) to catch engine-specific quirks.
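Snapshot diffing needs a normalization step so that any differences reflect real nondeterminism rather than serialization noise. The sketch below collapses whitespace and drops a hypothetical list of volatile attributes before hashing each captured subtree. It then reports the first step at which two runs diverge. Which attributes count as volatile is an assumption to tune per generator. A 32-bit FNV-1a hash keeps the example dependency-free, but a cryptographic hash such as SHA-256 is the safer choice at scale.

```ts
// Attributes assumed to vary between otherwise identical runs (tune for your generator).
const VOLATILE_ATTRS = ['nonce', 'data-render-ts'];

function normalizeHtml(outerHtml: string): string {
  let s = outerHtml.replace(/\s+/g, ' ').replace(/>\s+</g, '><').trim();
  for (const attr of VOLATILE_ATTRS) {
    s = s.replace(new RegExp(`\\s${attr}=("[^"]*"|'[^']*')`, 'g'), '');
  }
  return s;
}

// FNV-1a over the normalized markup, rendered as 8 hex digits.
function subtreeHash(outerHtml: string): string {
  const s = normalizeHtml(outerHtml);
  let h = 0x811c9dc5;
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return ('00000000' + h.toString(16)).slice(-8);
}

// Given per-step outerHTML captures from two runs of the same seed, return the
// first step whose normalized hashes differ (or where one run ended early), else -1.
function firstDivergence(runA: string[], runB: string[]): number {
  const n = Math.max(runA.length, runB.length);
  for (let i = 0; i < n; i++) {
    if (i >= runA.length || i >= runB.length) return i;
    if (subtreeHash(runA[i]) !== subtreeHash(runB[i])) return i;
  }
  return -1;
}
```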

Comparison to existing environments

- MiniWob++: Excellent for micro-manipulation tasks; lacks full-page complexity or theming.

- WebArena: Closer to open-web complexity; less hermetic; great for end-to-end benchmarks.

- Mind2Web: Valuable human demonstrations; not deterministic.

This blueprint combines the determinism and labelability of synthetic tasks with the diversity of real-world UI patterns via procedural generation and skins.

Implementation checklist

- Generator
  - Component library with forms, tables, carts, modals, custom elements, iframes.
  - Layout engine with seeded perturbations.
  - Data fixtures with seeded faker.
- Ground-truth
  - YAML schema and validator.
  - Runtime API (window.__gt) with emit/on/assert.
- Instrumentation
  - In-page event hooks; CDP/Playwright listeners; Service Worker logging.
  - Semantic locator computation.
- Skins/i18n
  - CSS variable tokens; multiple themes; RTL support; accessibility testing.
- Hermeticity
  - Deterministic time/RNG; SW intercepts; CSP; SRI.
  - Snapshot packager and manifest.
- Harness
  - Static server; episode runner; screenshot/video capture; DOM hash recorder.
- Training
  - RLHF preference tooling; OPE estimators; critics.

Example end-to-end flow

- Generate site: Use seed S=12345 to produce pages: home, products, cart, checkout; components include a shadow DOM counter and a virtualized table.

- Attach tasks: Checkout.yaml with DAG; additional bonus tasks like apply_coupon and update_quantity.

- Skin: corp + fr (French) locale; RTL flip disabled.

- Package snapshot: Tarball with manifest.json, SW, CSP, SRI.

- Run harness: Baseline scripted policy runs 10 episodes; logs events and ground-truth assertions.

- Label preferences: Annotators compare three trajectory pairs, and their labels train a reward model.

- Train agent: Fine-tune with RLHF; evaluate with OPE using WIS and DR.

- Validate: Flip to high-contrast theme and de locale; ensure success holds within 5%.

FAQ

Q: How do I prevent agents from cheating by reading window.__gt directly?

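- Provide a training-only build with __gt exposed and an evaluation build in which __gt is reachable only by the harness (for example, through a binding injected over CDP), not by page scripts. Messages sent with postMessage are visible to any script in the page, so they do not provide this isolation. In evaluation, run with the hardened build.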

Q: Can I include real third-party widgets?

- Only if you vendor them fully into the snapshot and disable any network calls via SW + CSP. Otherwise, you sacrifice hermeticity.

Q: How big should each snapshot be?

- Aim for <5 MB compressed per site. Prefer SVG icons, system fonts, and shared vendor bundles. Thousands of sites should fit comfortably in object storage.

Q: How do I verify determinism across environments?

- Re-run the same seed on different machines/containers and compare DOM subtree hashes and network logs. Differences should be zero; trace any residual nondeterminism to its source and eliminate it.

Roadmap and extensions

- Richer widgets: calendars with range selection, data grids with inline edit, kanban boards, code editors (Monaco) with intentional latency.

- Multi-tab tasks: require opening links in new tabs, switching focus, and coordinating cross-tab state.

- Keyboard-only mode: Force agents to navigate via tab/arrow/enter to learn accessibility pathways.

- Vision grounding: Provide pixel observations and DOM to test multimodal agents; randomize font rendering and antialiasing.

- Adversarial robustness: Auto-generate tricky layouts (overlapping hit targets, z-index traps) with known solutions.

- Bench-to-web transfer: Periodically evaluate on curated, permission-granted live sites to measure sim-to-real generalization.

Conclusion

Synthetic web farms are not a retreat from realism; they are a path to high-signal, scalable supervision. By combining procedural UI generation, embedded task graphs, rigorous auto-labeling, skin randomization, and hermetic snapshots, you create a laboratory where agents can actually learn to generalize. When integrated with RLHF and strong off-policy evaluation, you get both sample efficiency and trustworthy metrics. The result is a virtuous cycle: generate, train, evaluate, validate, and ship better browser agents faster and with fewer surprises.